Highly Trainable Neural Network Configuration

Patent No. US10984320 (titled "Highly Trainable Neural Network Configuration") was filed by Tesseract Systems LLC on May 1, 2017.

What is this patent about?

’320 relates to the field of computer-based neural networks, specifically the challenge of training very deep networks. Traditional deep neural networks, while theoretically powerful, become harder to optimize as the number of layers increases. A key reason is vanishing gradients: the error signal shrinks as it propagates backward through the network during training, which hinders learning, especially in the early layers.
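To see why the error signal fades, consider the textbook case of scalar units with a logistic sigmoid activation. The sigmoid's derivative never exceeds 0.25, so by the chain rule the gradient reaching the input of a chain of n such units (with unit weights) is at most 0.25^n. The sketch below is a hypothetical illustration of that general effect, not something taken from the patent; the unit weight, the starting input, and the depths are arbitrary choices.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_chain_gradient(depth: int, x: float = 0.0, w: float = 1.0) -> float:
    """d(output)/d(input) for `depth` stacked scalar units, each computing sigmoid(w * x)."""
    grad = 1.0
    for _ in range(depth):
        s = sigmoid(w * x)
        grad *= w * s * (1.0 - s)  # local derivative; s * (1 - s) never exceeds 0.25
        x = s                      # this unit's output feeds the next unit
    return grad


for depth in (1, 5, 10, 20):
    print(f"depth {depth:2d}: gradient {sigmoid_chain_gradient(depth):.3e}")
```

Each extra layer multiplies the gradient by another factor of at most 0.25, which is the shrinkage that makes the earliest layers so slow to train.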

The underlying idea behind ’320 is to introduce a gating mechanism within each neuron that controls the flow of information. Instead of always applying a non-linear transformation to its input, the neuron can pass the original input through unmodified, pass a transformed version of it, or pass a mix of both. This is achieved using transform and carry gates, which determine how the transformed and untransformed components are weighted.
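In symbols, the gated unit can be written as below. The notation (H for the plain transform, T and C for the transform and carry gates, and the parameter sets W_H, W_T, W_C) is assumed for illustration, following the common Highway Networks convention rather than the patent's claim wording. The last line shows the frequently used simplification of tying the carry gate to the transform gate.

```latex
\begin{aligned}
y &= H(x, W_H)\,T(x, W_T) + x\,C(x, W_C) \\
T = 0,\ C = 1 &\;\Rightarrow\; y = x \qquad \text{(input carried through unchanged)} \\
T = 1,\ C = 0 &\;\Rightarrow\; y = H(x, W_H) \qquad \text{(transformed signal only)} \\
C = 1 - T &\;\Rightarrow\; y = H(x, W_H)\,T + x\,(1 - T) \qquad \text{(mix of both)}
\end{aligned}
```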

The claims of ’320 focus on a computer-based method, a neural network architecture, and a computer-readable medium implementing this architecture. The core element is a neuron that receives an input signal, applies a first non-linear transform to produce a 'plain' signal, and uses two additional non-linear transforms (the gates) to produce a 'transform' signal and a 'carry' signal. The neuron then calculates a weighted sum of the original input signal and the 'plain' signal, in which the 'plain' signal is weighted by the 'transform' signal and the input signal by the 'carry' signal.
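The claimed neuron can be sketched as a small layer of such units in plain Python. This is a minimal illustration under stated assumptions, not the patent's implementation: the claims only require "non-linear transforms", so the choice of tanh for the plain signal, a logistic sigmoid for the transform and carry signals, the dense affine maps, and the names (`w_h`, `b_t`, `constant_params`, and so on) are all hypothetical. The carry signal has its own parameters here, mirroring the claim language, rather than being tied to the transform signal.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def dense(x, weights, biases):
    """Affine map: one row of input weights (plus a bias) per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, biases)]


def gated_layer(x, p):
    """One layer of gated units; p holds hypothetical parameters w_*/b_*."""
    plain = [math.tanh(a) for a in dense(x, p["w_h"], p["b_h"])]     # first transform
    transform = [sigmoid(a) for a in dense(x, p["w_t"], p["b_t"])]  # second transform (gate)
    carry = [sigmoid(a) for a in dense(x, p["w_c"], p["b_c"])]      # third transform (gate)
    # Weighted sum: plain signal weighted by transform, input weighted by carry.
    return [h * t + xi * c for h, t, xi, c in zip(plain, transform, x, carry)]


def constant_params(d, b_h, b_t, b_c):
    """Zero weights with fixed biases, handy for forcing the gates open or shut."""
    zeros = [[0.0] * d for _ in range(d)]
    return {"w_h": zeros, "b_h": [b_h] * d,
            "w_t": zeros, "b_t": [b_t] * d,
            "w_c": zeros, "b_c": [b_c] * d}


x = [0.3, -1.2]
print(gated_layer(x, constant_params(2, 0.7, -50.0, 50.0)))  # carry mode: close to x
print(gated_layer(x, constant_params(2, 0.7, 50.0, -50.0)))  # transform mode: close to tanh(0.7)
print(gated_layer(x, constant_params(2, 0.7, 0.0, 0.0)))     # both gates at 0.5: an even mix
```

Forcing the gate biases to large values reproduces the two extreme behaviours described above; with learned weights the gates take intermediate, input-dependent values.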

Conceptually, this architecture creates 'information highways' through the network. By initializing the network to favor carry behavior (for example, by giving the transform gates a negative initial bias), the original input signal can propagate through many layers without significant attenuation. This makes very deep networks easier to train, because the error signal can likewise flow backward through many layers without vanishing. The transform gates learn to regulate this flow, so the network can choose, depending on the input, whether to transform a signal or simply pass it through.
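The effect of starting in carry mode on the backward signal can be checked numerically. The sketch below compares the derivative of the output with respect to the input for a stack of plain scalar tanh units and for the same stack with a gated carry path. All settings are illustrative assumptions: scalar units, fixed weights of 0.5, the carry weight tied to the transform gate as 1 - t, and a transform-gate bias of -3 so that each unit starts out mostly carrying its input.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def plain_stack_gradient(depth: int, x: float, w: float = 0.5) -> float:
    """d(output)/d(input) through `depth` scalar units y = tanh(w * x)."""
    grad = 1.0
    for _ in range(depth):
        h = math.tanh(w * x)
        grad *= w * (1.0 - h * h)  # at most w = 0.5 per layer
        x = h
    return grad


def highway_stack_gradient(depth: int, x: float, w_h: float = 0.5,
                           w_t: float = 0.5, b_t: float = -3.0) -> float:
    """Same stack, but each unit computes y = h*t + x*(1 - t) with a gated carry path."""
    grad = 1.0
    for _ in range(depth):
        h = math.tanh(w_h * x)
        t = sigmoid(w_t * x + b_t)  # negative bias keeps t small, i.e. mostly carry
        # dy/dx = path through h + path through the gate t + direct carry path
        local = t * (1.0 - h * h) * w_h + (h - x) * t * (1.0 - t) * w_t + (1.0 - t)
        grad *= local
        x = h * t + x * (1.0 - t)
    return grad


for depth in (10, 30, 50):
    print(depth, plain_stack_gradient(depth, 0.5), highway_stack_gradient(depth, 0.5))
```

The plain stack loses at least half of the gradient at every layer, while each gated unit contributes a factor close to 1 - t, so at depth 30 the gated stack's input gradient is many orders of magnitude larger.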

This approach differs from prior solutions that rely on careful initialization schemes or complex training techniques to overcome the optimization difficulties of deep networks. With the gating mechanism in place, ’320 offers a more robust and flexible way to train networks of virtually arbitrary depth directly with standard stochastic gradient descent (SGD) with momentum, which opens the door to exploring much deeper architectures than plain networks allow.
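To make the training claim concrete, here is a small, self-contained sketch that trains a 30-layer stack of scalar gated units with gradient descent plus classical momentum on a toy 1-D regression target. Everything is an illustrative assumption rather than the patent's reference implementation: scalar units, tanh and sigmoid as the non-linear transforms, the carry weight tied to 1 - t, a gate bias of -2, the learning rate, the momentum, and the sine target. For brevity each update uses the whole nine-point dataset, so this is momentum gradient descent rather than truly stochastic sampling.

```python
import math
import random


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def init_params(depth, rng, gate_bias=-2.0):
    # One scalar gated unit per layer: [w_h, b_h, w_t, b_t].
    # A negative transform-gate bias starts every unit close to "carry".
    return [[rng.gauss(0.0, 0.5), 0.0, rng.gauss(0.0, 0.5), gate_bias] for _ in range(depth)]


def forward(params, x):
    cache = []
    for w_h, b_h, w_t, b_t in params:
        h = math.tanh(w_h * x + b_h)
        t = sigmoid(w_t * x + b_t)
        cache.append((x, h, t))
        x = h * t + x * (1.0 - t)  # carry weight coupled as 1 - t
    return x, cache


def backward(params, cache, grad_out):
    grads = [[0.0] * 4 for _ in params]
    g = grad_out
    for i in reversed(range(len(params))):
        w_h, _, w_t, _ = params[i]
        x, h, t = cache[i]
        d_ah = g * t * (1.0 - h * h)        # through the plain signal
        d_at = g * (h - x) * t * (1.0 - t)  # through the transform gate
        grads[i] = [d_ah * x, d_ah, d_at * x, d_at]
        g = g * (1.0 - t) + d_ah * w_h + d_at * w_t  # carry path + both transforms
    return grads


def loss_and_grads(params, data):
    loss = 0.0
    total = [[0.0] * 4 for _ in params]
    for x, target in data:
        y, cache = forward(params, x)
        err = y - target
        loss += err * err / len(data)  # mean squared error
        for acc, g in zip(total, backward(params, cache, 2.0 * err / len(data))):
            for k in range(4):
                acc[k] += g[k]
    return loss, total


def train(depth=30, steps=300, lr=0.02, momentum=0.9, seed=0):
    rng = random.Random(seed)
    params = init_params(depth, rng)
    velocity = [[0.0] * 4 for _ in params]
    data = [(i / 4, 0.5 * math.sin(math.pi * i / 4)) for i in range(-4, 5)]
    history = []
    for _ in range(steps):
        loss, grads = loss_and_grads(params, data)
        history.append(loss)
        for p, v, g in zip(params, velocity, grads):
            for k in range(4):
                v[k] = momentum * v[k] - lr * g[k]  # classical momentum update
                p[k] += v[k]
    return params, history


params, history = train()
print(f"loss: {history[0]:.4f} -> {history[-1]:.4f}")
```

The backward pass is written out by hand so the carry term `g * (1.0 - t)` is visible: it is the path that lets the error signal reach the earliest layers largely intact.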

How does this patent fit in the bigger picture?

Technical landscape at the time

Around the time ’320 was filed, deep architectures were widely used, but training very deep networks remained non-trivial. The difficulty of optimizing such networks had prompted research into careful initialization techniques and multi-stage training approaches.

Novelty and Inventive Step

The examiner allowed the claims because the applicant's amendments overcame the previous rejections. Specifically, the examiner was persuaded that the amended claims contained additional elements (applying several non-linear transforms to signals) amounting to significantly more than the abstract ideas in the claims, which rendered the claims statutory under 35 U.S.C. 101. The examiner also noted that the prior art did not teach the specific transforms and calculations in the context of the methods, neural network, and computer-readable medium as claimed.

Claims

This patent includes 28 claims, with independent claims 1, 15, 26, and 28. The independent claims generally focus on a computer-based method, a computer-based neural network, a computer-readable medium, and another method, all related to facilitating training in a computer-based neural network using non-linear transforms and weighted sums within neurons. The dependent claims generally elaborate on and refine the specifics of the independent claims, adding details and limitations to the method, network, and medium.

Key Claim Terms New

Definitions of key terms used in the patent claims.

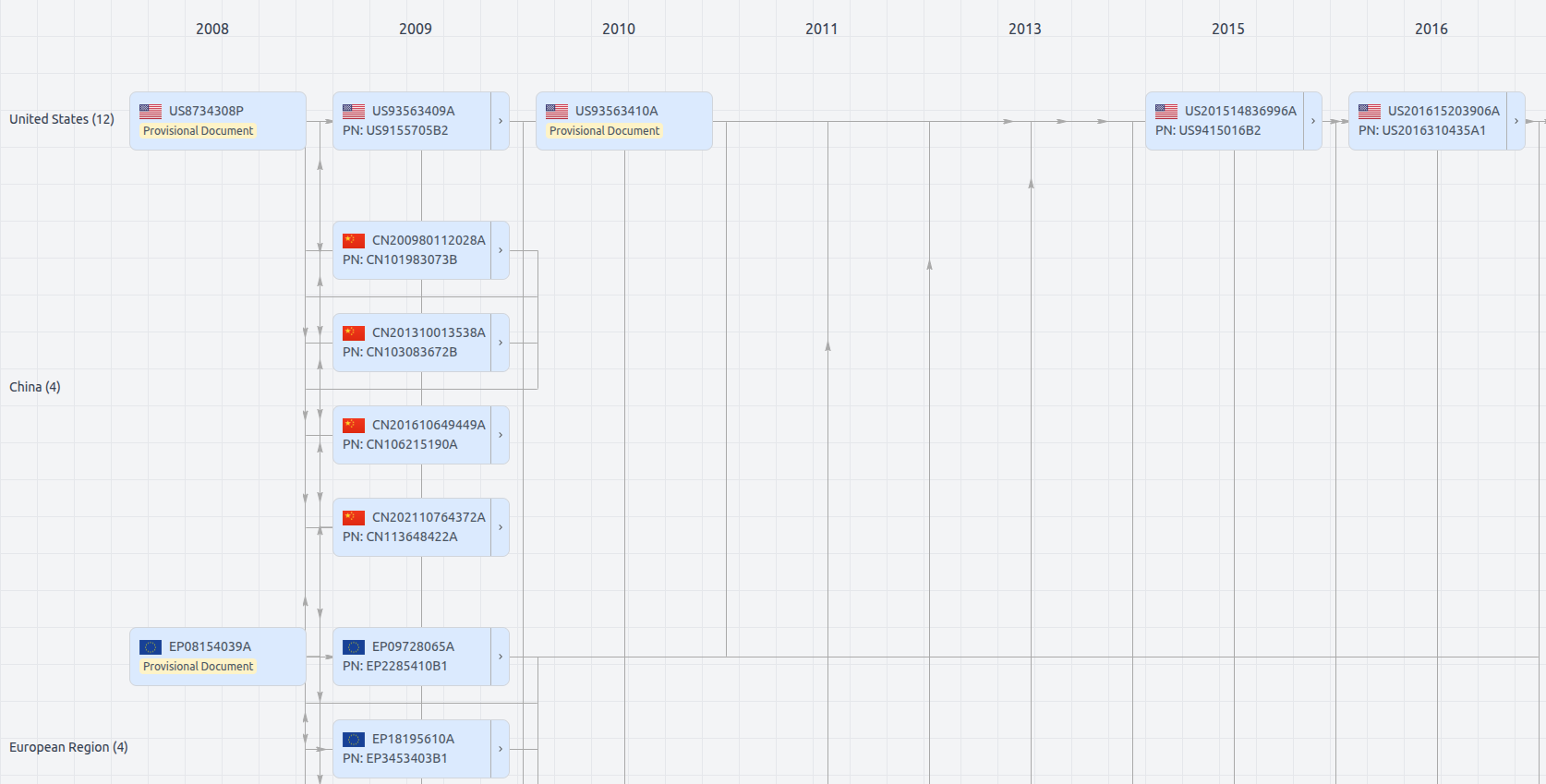


US10984320

- Application Number: US15582831
- Filing Date: May 1, 2017
- Status: Granted
- Expiry Date: Jan 20, 2040
