Video To Data

Patent No. US11126853 (titled "Video To Data") was filed by Cellular South Inc on Feb 8, 2019.

What is this patent about?

’853 is related to the field of video analysis and metadata generation. Existing systems struggle to efficiently extract meaningful data from video content, often lacking the speed and accuracy needed for real-time applications. Traditional audio-to-text algorithms lack semantic understanding, while image recognition can be computationally intensive and prone to errors. The patent addresses the need for a system that can quickly and accurately generate contextual data from video, including object recognition, audio transcription, and metadata embedding.

The underlying idea behind ’853 is to leverage a distributed processing architecture and fractal-based object recognition to efficiently extract and analyze video content. The system segments the video into smaller chunks, distributes these chunks across multiple processing nodes, and then uses image and audio analysis techniques to identify objects, transcribe speech, and generate metadata. The use of fractals allows for efficient object recognition by comparing images to a compressed representation of known objects, which can be updated over time to improve accuracy.
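The segment-and-distribute step can be sketched as follows. This is a minimal illustration under assumptions: frames are represented only by their indices, segments are dealt to nodes round-robin, and threads stand in for separate processing nodes. The function names (`split_into_segments`, `assign_round_robin`, `process_segment`, `run_node`, `run_distributed`) are hypothetical and are not taken from the patent.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_segments(frame_count, segment_len):
    """Split frame indices [0, frame_count) into half-open (start, end) ranges."""
    if segment_len <= 0:
        raise ValueError("segment_len must be positive")
    return [(start, min(start + segment_len, frame_count))
            for start in range(0, frame_count, segment_len)]


def assign_round_robin(segments, node_count):
    """Map node id -> list of segments, dealing segments out in turn."""
    assignments = {node: [] for node in range(node_count)}
    for i, segment in enumerate(segments):
        assignments[i % node_count].append(segment)
    return assignments


def process_segment(segment):
    # Hypothetical placeholder for a demultiplexer node's work
    # (audio extraction, still-frame extraction, analysis).
    start, end = segment
    return {"segment": segment, "frames": end - start}


def run_node(node_segments):
    """One 'node' processes every segment assigned to it."""
    return [process_segment(s) for s in node_segments]


def run_distributed(frame_count, segment_len, node_count):
    segments = split_into_segments(frame_count, segment_len)
    assignments = assign_round_robin(segments, node_count)
    # Threads stand in for separate processing nodes in this sketch.
    with ThreadPoolExecutor(max_workers=node_count) as pool:
        per_node = list(pool.map(run_node, assignments.values()))
    # Flatten, then restore original video order for reassembly.
    results = [r for node_results in per_node for r in node_results]
    return sorted(results, key=lambda r: r["segment"][0])
```

For example, `split_into_segments(10, 4)` returns `[(0, 4), (4, 8), (8, 10)]`. The final sort reflects the need to reassemble per-segment results in video order regardless of which node finished first.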

The claims of ’853 focus on a system comprising a coordinator, a splitter, demultiplexer nodes, an image detector, and an object recognizer. The coordinator manages the overall workflow, the splitter segments the video, the demultiplexer nodes extract audio and still frames, the image detector identifies objects in the frames, and the object recognizer compares these objects to fractal representations to identify them. A key aspect of the claim is the adjustability of the image detector to increase detection of non-primary images in the video, allowing for more detailed analysis.
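One way to picture the adjustable image detector is as a confidence threshold: lowering it admits weaker detections, which tend to be the smaller, non-primary objects in a frame. The sketch below assumes that model. The `Candidate` fields, the threshold semantics, and the rule that the largest detection counts as "primary" are illustrative assumptions, not the claimed mechanism.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    label: str
    score: float  # detector confidence in [0, 1]
    area: float   # fraction of the frame covered, in [0, 1]


def detect(candidates, threshold):
    """Keep candidates whose confidence meets the adjustable threshold."""
    return [c for c in candidates if c.score >= threshold]


def split_primary(detections):
    """Assumption: the largest detected object is primary; the rest are non-primary."""
    if not detections:
        return None, []
    primary = max(detections, key=lambda c: c.area)
    others = [c for c in detections if c is not primary]
    return primary, others
```

With a person (score 0.95, area 0.4), a car (0.6, 0.1) and a sign (0.35, 0.02), a threshold of 0.8 keeps only the person, while a threshold of 0.3 also returns the car and the sign as non-primary detections.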

In practice, the system segments a video into smaller parts and distributes them across multiple demultiplexer nodes for parallel processing. These nodes extract audio and still frames, which are then analyzed by the image detector and object recognizer. The image detector's adjustability allows for fine-tuning the level of detail captured, enabling the system to identify both prominent and subtle objects. The object recognizer compares detected objects to a fractal database, which is continuously updated with new images to improve recognition accuracy. The coordinator then embeds the extracted metadata into the video, creating a content-rich file.
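The extraction and embedding steps can be illustrated with two small helpers: one chooses still-frame timestamps inside a segment, and one merges per-node results into a single time-ordered metadata track. This is a sketch under assumptions: timestamps are integer milliseconds, the JSON layout (`version`, `entries`, `t_ms`, `objects`) is hypothetical, and actually muxing the track into a video container is out of scope.

```python
import json


def still_frame_times_ms(start_ms, end_ms, interval_ms):
    """Timestamps (ms) at which a node would grab still frames from its segment."""
    if interval_ms <= 0:
        raise ValueError("interval_ms must be positive")
    return list(range(start_ms, end_ms, interval_ms))


def build_metadata_track(node_results):
    """Merge per-node [(t_ms, labels), ...] lists into one time-ordered JSON track.

    The JSON layout is a hypothetical example, not a format from the patent.
    """
    entries = [
        {"t_ms": t_ms, "objects": sorted(labels)}
        for results in node_results
        for t_ms, labels in results
    ]
    entries.sort(key=lambda e: e["t_ms"])
    return json.dumps({"version": 1, "entries": entries})
```

`still_frame_times_ms(0, 3000, 1000)` yields `[0, 1000, 2000]`. Using integer milliseconds avoids the drift that repeatedly adding a floating-point interval can introduce.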

The invention differentiates itself from prior approaches through its distributed architecture, fractal-based object recognition, and adjustable image detection. The distributed architecture enables faster processing by parallelizing the analysis of video content. Fractals provide a more efficient way to store and compare object representations than traditional image matching techniques. The adjustable image detection captures more detailed information from the video, including non-primary images that other systems might miss. This combination of features results in a system that is both faster and more accurate in generating contextual data from video.

How does this patent fit in bigger picture?

Technical landscape at the time

In the mid-2010s, the period to which ’853 traces its origins, object recognition was typically implemented with computationally intensive algorithms, and hardware constraints made real-time video analysis non-trivial. Systems commonly relied on pre-trained models and large datasets for image recognition, and updating those models with new data was a significant challenge.

Novelty and Inventive Step

The examiner allowed the application because claim 1 recites an object recognizer that compares an image of an object to a fractal, where the fractal includes a landmark-based representation of the object and the recognizer updates the fractal with the image.
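The allowed feature (compare an image of an object against a landmark-based representation, then update that representation with the image) can be approximated with a simple landmark template. This is explicitly a stand-in, not fractal compression: it assumes each object is described by a fixed number of 2D landmark points, normalizes away position and scale (but not rotation), compares shapes by root-mean-square distance, and updates the template as a running mean. `LandmarkTemplate`, `normalize`, and the `tolerance` default are hypothetical.

```python
import math


def normalize(landmarks):
    """Center on the centroid and scale to unit RMS radius (position/scale invariant)."""
    n = len(landmarks)
    cx = sum(x for x, _ in landmarks) / n
    cy = sum(y for _, y in landmarks) / n
    centered = [(x - cx, y - cy) for x, y in landmarks]
    scale = math.sqrt(sum(x * x + y * y for x, y in centered) / n)
    if scale == 0:
        raise ValueError("all landmarks coincide")
    return [(x / scale, y / scale) for x, y in centered]


class LandmarkTemplate:
    """Hypothetical stand-in for the patent's fractal: a running-mean landmark shape.

    Not fractal compression; it only mirrors the compare-then-update workflow.
    """

    def __init__(self, landmarks):
        self.shape = normalize(landmarks)
        self.count = 1  # number of observations folded into the mean

    def _observe(self, landmarks):
        if len(landmarks) != len(self.shape):
            raise ValueError("landmark count mismatch")
        return normalize(landmarks)

    def distance(self, landmarks):
        """RMS distance between the template and a normalized observation."""
        obs = self._observe(landmarks)
        total = sum((sx - ox) ** 2 + (sy - oy) ** 2
                    for (sx, sy), (ox, oy) in zip(self.shape, obs))
        return math.sqrt(total / len(obs))

    def matches(self, landmarks, tolerance=0.1):
        return self.distance(landmarks) <= tolerance

    def update(self, landmarks):
        """Fold a recognized observation into the template (incremental mean)."""
        obs = self._observe(landmarks)
        self.count += 1
        w = 1.0 / self.count
        self.shape = [(sx + w * (ox - sx), sy + w * (oy - sy))
                      for (sx, sy), (ox, oy) in zip(self.shape, obs)]
```

A unit-square template matches a translated, enlarged square (distance near zero) but not a stretched quadrilateral such as `[(0, 0), (3, 0), (1, 1), (0, 1)]`, whose distance is about 0.63. Calling `update` after a match is what lets the representation drift toward the objects actually seen in video, which is the behavior the allowed claim emphasizes.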

Claims

This patent contains 11 claims, with claim 1 being the only independent claim. Independent claim 1 is directed to a system for generating data from a video by segmenting the video, extracting audio and still frames, detecting images of objects, and comparing the images to a fractal representation. The dependent claims generally elaborate on the components and functionalities of the system described in independent claim 1, adding details regarding the coordinator, recognizer, geometric features, skin textures, three-dimensional models, processors, cameras, metadata embedding, and metadata streams.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

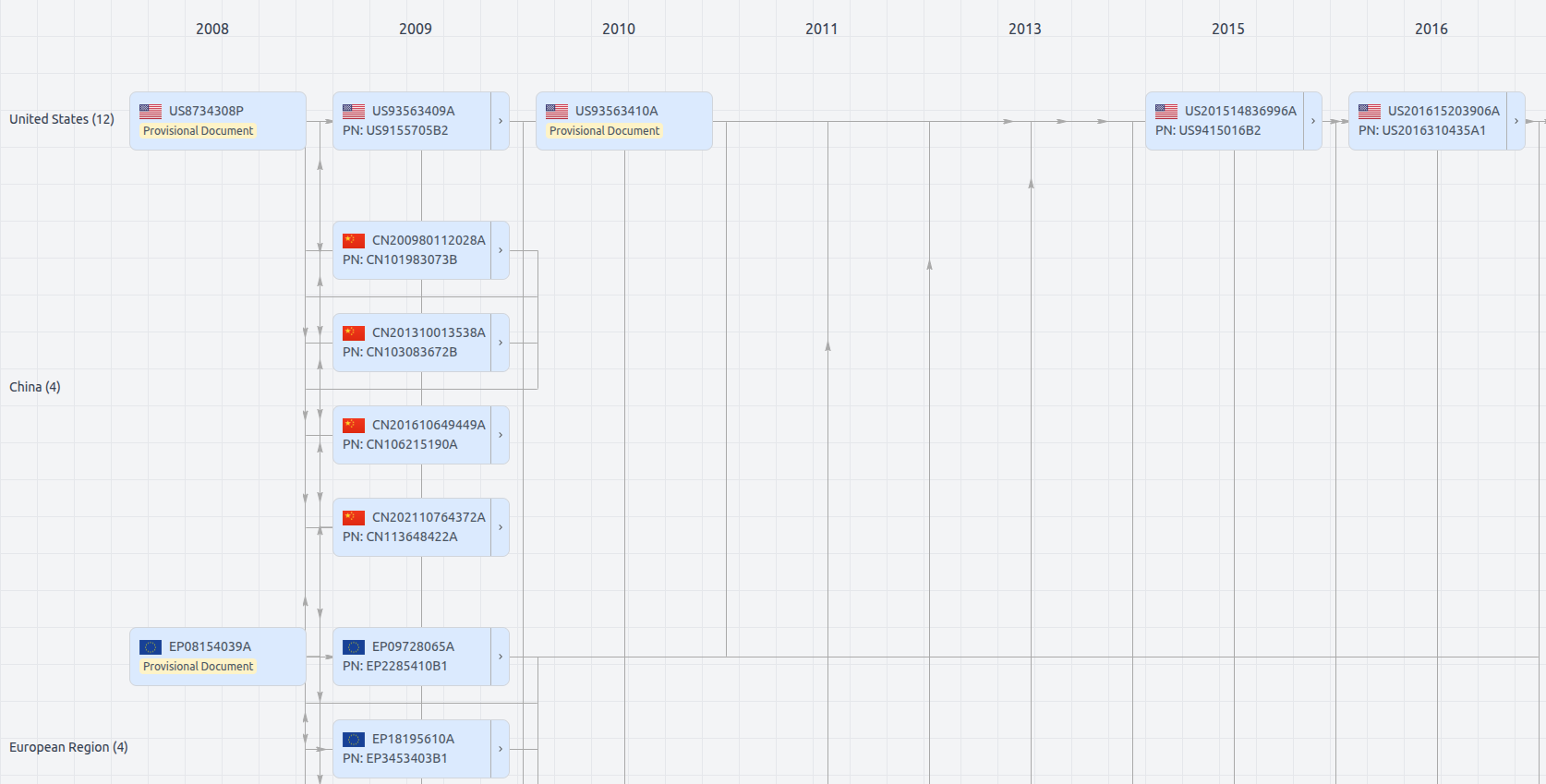

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.


US11126853

- Application Number: US16271773
- Filing Date: Feb 8, 2019
- Status: Granted
- Expiry Date: Sep 23, 2036
