Predicting Three-Dimensional Features For Autonomous Driving

The application for Patent No. US11150664 (titled "Predicting Three-Dimensional Features For Autonomous Driving") was filed by Tesla Inc on Feb 1, 2019.

What is this patent about?

The ’664 patent relates to autonomous vehicle control systems, specifically those that use machine learning for environmental perception. It addresses the challenge of creating high-quality training data for the deep learning models used in self-driving applications. Traditional methods rely heavily on manual annotation, which is time-consuming, expensive, and prone to inaccuracies, especially for complex or occluded features in the environment.

The underlying idea behind ’664 is to leverage a time series of sensor data to create more accurate training data for machine learning models. Instead of relying on a single snapshot, the system analyzes a sequence of images and sensor readings to build a more complete and accurate representation of the environment. This is achieved by combining information from multiple viewpoints and time steps to overcome limitations such as occlusions or poor visibility in individual frames.

The claims of ’664 focus on a system that uses a processor to receive image data from a vehicle's camera and determine a three-dimensional trajectory of a machine learning feature (e.g., a lane line). This determination is based on inputting the image data into a trained machine learning model. The model is trained using training data that includes a ground truth (the actual 3D trajectory) derived from a plurality of time series elements (images captured over time) and a selected time series element.
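The pairing described in the claims can be pictured as a small data structure: one selected time series element supplies the input image, while the ground truth is derived from the whole series. The following is a minimal sketch under stated assumptions, not the patent's implementation. The names `TimeSeriesElement`, `TrainingExample`, `make_training_example`, and `merge_observations` are hypothetical, and the merge step is a simple stand-in for whatever reconstruction the real system performs.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Point3D = Tuple[float, float, float]


@dataclass
class TimeSeriesElement:
    """One captured moment: an image plus the lane-line points observed in it (hypothetical)."""
    image_id: str
    observed_points: List[Point3D]


@dataclass
class TrainingExample:
    """Input image from the selected element, label derived from the whole series."""
    image_id: str
    ground_truth: List[Point3D]


def merge_observations(elements: Sequence[TimeSeriesElement]) -> List[Point3D]:
    # Stand-in for the real reconstruction: pool every observation and
    # order the points by distance along the direction of travel (x).
    pooled = [p for e in elements for p in e.observed_points]
    return sorted(pooled)


def make_training_example(
    elements: Sequence[TimeSeriesElement],
    selected_index: int,
    derive_ground_truth: Callable[[Sequence[TimeSeriesElement]], List[Point3D]],
) -> TrainingExample:
    # The label uses all elements; the input uses only the selected one.
    selected = elements[selected_index]
    return TrainingExample(selected.image_id, derive_ground_truth(elements))


elements = [
    TimeSeriesElement("img0", [(5.0, 1.8, 0.0)]),
    TimeSeriesElement("img1", [(6.0, 1.8, 0.1)]),
]
example = make_training_example(elements, 0, merge_observations)
```

Passing the derivation step in as a callable keeps the pairing logic separate from how the ground truth is actually reconstructed.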

In practice, the system captures a video sequence as the vehicle moves. For each frame in the sequence, the system also records odometry data (vehicle speed, steering angle, etc.). By analyzing the entire sequence, the system can build a more accurate 3D representation of features like lane lines, even if they are partially obscured in some frames. The system then uses this 3D representation as the 'ground truth' to train a machine learning model to predict the 3D trajectory of lane lines from a single image.
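One way to picture how a sequence plus odometry yields a fuller lane line is dead reckoning: integrate speed and yaw rate into a pose per frame, then move each frame's lane-line observations into the coordinate frame of the selected frame. The sketch below is illustrative only and assumes planar vehicle motion, a yaw rate already derived from odometry, and per-frame lane points given in vehicle coordinates. The patent summary does not specify this procedure, and `Frame`, `integrate_poses`, and `merge_lane_points` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

Point3D = Tuple[float, float, float]


@dataclass
class Frame:
    dt: float                   # seconds since the previous frame
    speed: float                # m/s, from odometry
    yaw_rate: float             # rad/s, assumed derivable from odometry
    lane_points: List[Point3D]  # lane-line points in this frame's vehicle coordinates


def integrate_poses(frames: List[Frame]) -> List[Tuple[float, float, float]]:
    """Dead-reckon a planar pose (x, y, heading) per frame; the first frame is the origin."""
    poses = []
    x = y = heading = 0.0
    for i, frame in enumerate(frames):
        if i > 0:
            heading += frame.yaw_rate * frame.dt
            x += frame.speed * frame.dt * math.cos(heading)
            y += frame.speed * frame.dt * math.sin(heading)
        poses.append((x, y, heading))
    return poses


def merge_lane_points(frames: List[Frame], selected: int = 0) -> List[Point3D]:
    """Express every frame's lane points in the selected frame's vehicle coordinates."""
    poses = integrate_poses(frames)
    sx, sy, sh = poses[selected]
    merged = []
    for (px, py, ph), frame in zip(poses, frames):
        for lx, ly, lz in frame.lane_points:
            # Vehicle frame -> world frame (rotate by heading, then translate).
            wx = px + lx * math.cos(ph) - ly * math.sin(ph)
            wy = py + lx * math.sin(ph) + ly * math.cos(ph)
            # World frame -> selected vehicle frame (inverse transform).
            dx, dy = wx - sx, wy - sy
            merged.append((
                dx * math.cos(sh) + dy * math.sin(sh),
                -dx * math.sin(sh) + dy * math.cos(sh),
                lz,  # height kept as observed, since motion is assumed planar
            ))
    return sorted(merged)


frames = [
    Frame(dt=0.0, speed=10.0, yaw_rate=0.0, lane_points=[(5.0, 1.8, 0.0)]),
    Frame(dt=0.1, speed=10.0, yaw_rate=0.0, lane_points=[(5.0, 1.8, 0.05)]),
    Frame(dt=0.1, speed=10.0, yaw_rate=0.0, lane_points=[(5.0, 1.8, 0.10)]),
]
trajectory = merge_lane_points(frames, selected=0)
```

In this toy run the vehicle drives straight at 10 m/s, so a point seen 5 m ahead in each later frame lands progressively farther ahead in the first frame's coordinates. The merged list therefore extends the lane line beyond what the first frame alone observed.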

This approach differs from prior solutions that rely on manual annotation or single-frame analysis. By using a time series of data, the system can automatically generate training data with higher accuracy and completeness. This leads to machine learning models that are better at perceiving the environment and making decisions for autonomous driving. The use of a three-dimensional trajectory also allows for more precise vehicle control, especially in challenging conditions such as curves or hills.

How does this patent fit in the bigger picture?

Technical landscape at the time

In the late 2010s, when ’664 was filed, autonomous vehicle systems commonly relied on sensor data fusion and machine learning for perception and control. Training data was typically curated through manual annotation and labeling, and hardware and software constraints made it non-trivial to generate high-quality, accurately labeled training datasets for complex scenarios.

Novelty and Inventive Step

The claims were rejected in a non-final office action, in which the examiner issued rejections under 35 U.S.C. 103 and 112. The prosecution record does not describe the technical reasoning or specific claim changes that led to allowance.

Claims

This patent contains 19 claims, with claims 1, 18, and 19 being independent. The independent claims are directed to a system, a computer program product, and a method for determining a three-dimensional trajectory of a machine learning feature or vehicle lane line using a machine learning model to automatically control a vehicle. The dependent claims generally elaborate on and refine the elements and features of the independent claims.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

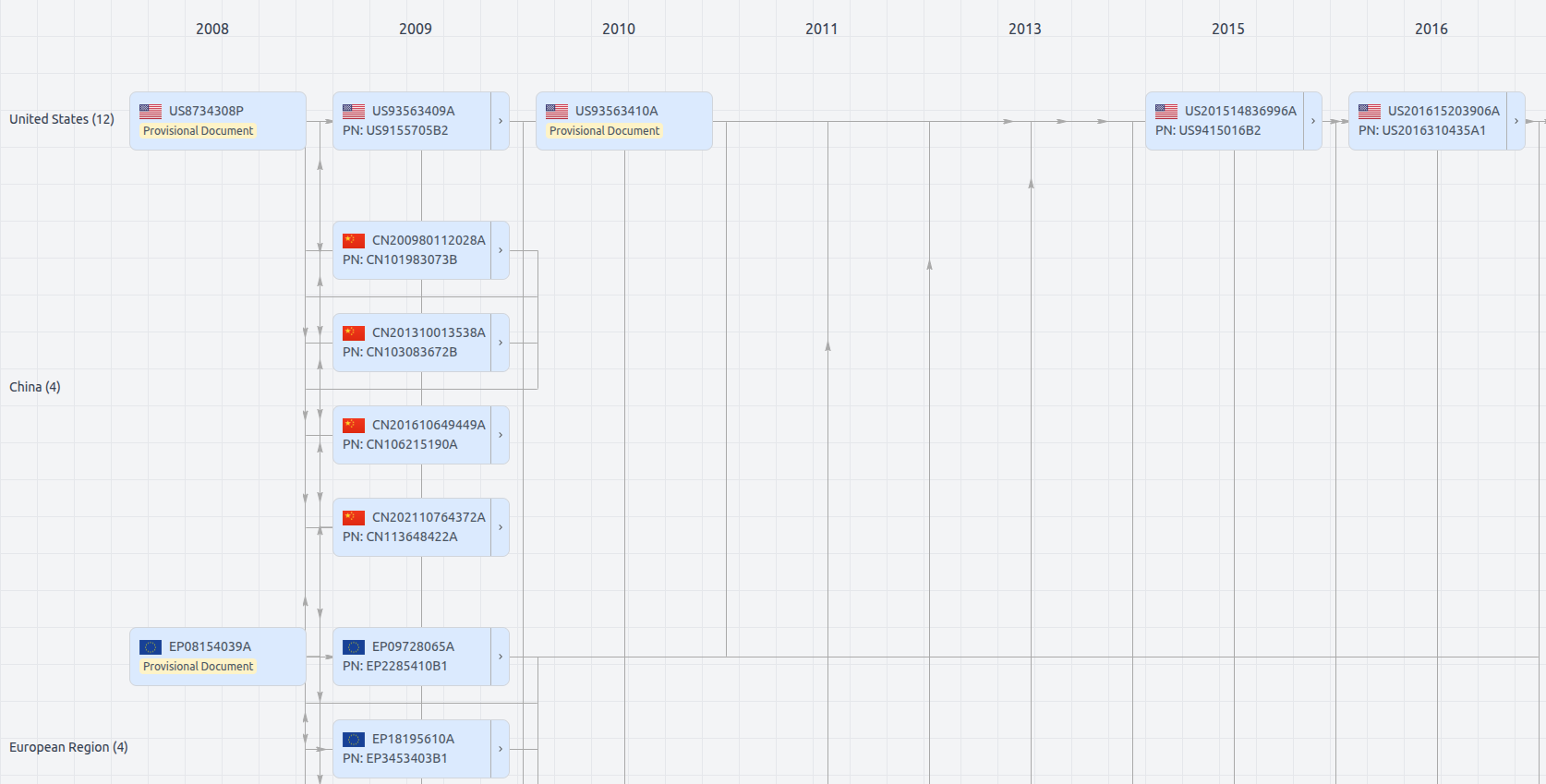

Patent Family

US11150664

- Application Number: US16265720
- Filing Date: Feb 1, 2019
- Status: Granted
- Expiry Date: Jan 2, 2040
- External Links: Slate, USPTO, Google Patents