Computational Array Microprocessor System Using Non-Consecutive Data Formatting

Patent No. US11157441 (titled "Computational Array Microprocessor System Using Non-Consecutive Data Formatting") was filed by Tesla Inc on Mar 13, 2018.

What is this patent about?

’441 is related to the field of microprocessors and, more specifically, to systems that accelerate machine learning tasks. Modern machine learning, especially deep learning, relies heavily on matrix and vector computations. A key bottleneck in these computations is the latency associated with fetching data from memory, particularly when dealing with non-contiguous data elements required for operations like strided convolutions.

The underlying idea behind ’441 is to improve the throughput of a microprocessor system by using a hardware data formatter to efficiently gather data for a computational array. Instead of loading individual data elements from memory, the data formatter groups elements into subsets of consecutive memory locations. This allows for more efficient memory access, as a single memory request can load an entire subset. The hardware data formatter then provides these subsets to the computational array for parallel processing.

The claims of ’441 focus on a microprocessor system comprising a computational array and a hardware data formatter. The hardware data formatter gathers a group of values for the computational array based on a data formatting operation that identifies at least a stride . The hardware data formatter includes multiple read buffers, each storing a subset of consecutive values from memory. The number of values from each subset to be used for processing is determined by the stride, and the computational array disables computation units corresponding to the unused values.

In practice, the hardware data formatter calculates memory addresses for subsets of data, checks if these subsets are cached, and loads them into read buffers. When a stride is used, some elements within a loaded subset might not be needed for the computation. The hardware data formatter still loads these elements but the computational array disables the corresponding computation units, effectively filtering out the unneeded data. This approach allows the system to handle strided operations efficiently without requiring complex memory access patterns for each individual element.

This design differentiates itself from prior approaches by optimizing data loading for parallel processing. Instead of requiring all input elements to be consecutive in memory (which limits flexibility) or treating each element as independent (which incurs high memory access overhead), ’441 strikes a balance. By loading subsets of consecutive elements and then selectively utilizing them based on the stride, the system minimizes memory access latency while still supporting non-contiguous data access patterns. The disabling of computation units further optimizes the process by preventing unnecessary calculations on unused data.

How does this patent fit in bigger picture?

Technical landscape at the time

In the late 2010s when ’441 was filed, machine learning and AI processing commonly relied on parallel processing architectures such as GPUs to accelerate mathematical operations on large datasets. At a time when memory access latency was a significant bottleneck, systems commonly relied on complex memory management schemes to optimize data loading for computational arrays. Hardware or software constraints made it non-trivial to efficiently load non-consecutive data elements from memory for parallel processing, especially in applications requiring high throughput and low latency.

Novelty and Inventive Step

The examiner allowed the claims because the prior art, whether considered alone or in combination, did not teach a hardware data formatter configured to gather a group of values based on a data formatting operation that identifies at least a stride. Furthermore, the prior art did not teach a hardware data formatter comprising a plurality of read buffers configured to store respective subsets of the values, where the number of values from each subset is determined based on the stride, and where the computational array disables particular computation units corresponding to the remaining values of each subset which are not utilized.

Claims

There are 23 claims in total. Claims 1, 20, and 21 are independent. The independent claims are generally directed to a microprocessor system and a method involving a computational array, a hardware data formatter, and memory access with a stride. The dependent claims generally elaborate on the features and functionalities of the independent claims, such as the arrangement and processing of data subsets, cache operations, and specific applications of the system.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

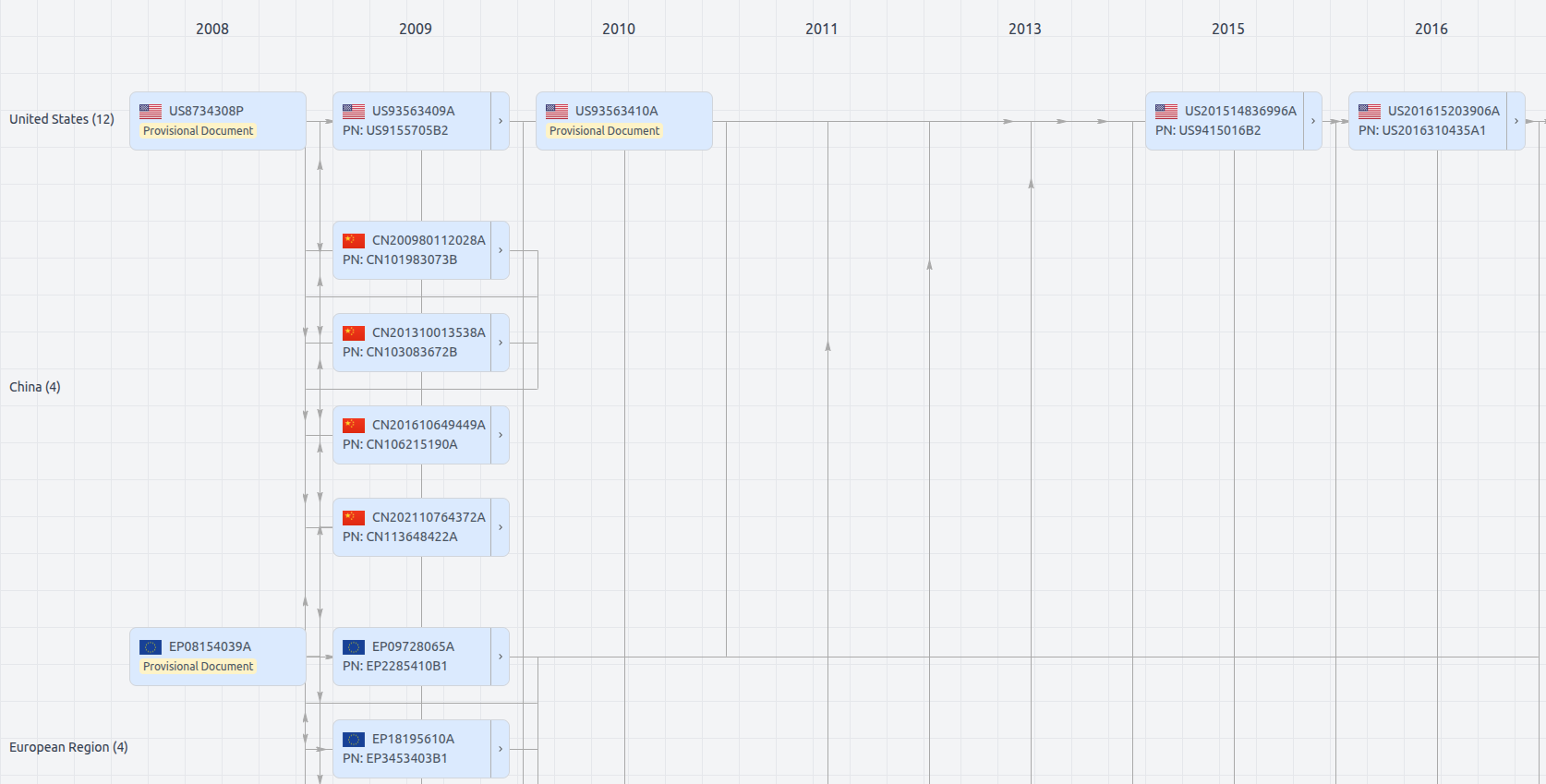

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

Date

Description

Get instant alerts for new documents

US11157441

- Application Number

- US15920173

- Filing Date

- Mar 13, 2018

- Status

- Granted

- Expiry Date

- May 27, 2038

- External Links

- Slate, USPTO, Google Patents