Real Time Assessment Of Picture Quality

Patent No. US11252325 (titled "Real Time Assessment Of Picture Quality") was filed by Snapaid Ltd on Mar 2, 2021.

What is this patent about?

’325 relates to image processing, specifically to systems and methods for assessing the quality of captured images in real time on devices such as smartphones. The background notes that even advanced camera systems often require the user to judge and enhance image quality, so users tend to take multiple shots to make sure that one of them is good. The patent aims to automate and improve this process by combining sensor data with image analysis.

The underlying idea behind ’325 is to combine multiple quality indicators derived from various sensors (camera, accelerometer, gyroscope) and image analysis techniques to provide a comprehensive and dynamic assessment of image quality. This involves not only evaluating individual aspects like focus, exposure, and motion blur but also considering the reliability (confidence level) of each indicator and their interdependencies. The system then uses this information to guide the user towards capturing a better image or automatically selecting the best image from a stream.
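The combination of indicators and confidence levels described above can be sketched as a confidence-weighted mean. This is a minimal illustration under assumptions that the summary does not state: every indicator score and confidence lies in [0, 1], and an indicator's effective weight is its relevance weight multiplied by its confidence. The `Indicator` class and `total_quality` function are hypothetical names, not taken from the patent.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Indicator:
    """One quality indicator (hypothetical structure for illustration)."""
    name: str
    score: float       # quality of this aspect in [0, 1]; 1 is best
    confidence: float  # reliability of the score in [0, 1]
    weight: float      # relevance of this aspect to overall quality


def total_quality(indicators: List[Indicator]) -> float:
    """Combine indicators, using weight * confidence as each one's effective weight."""
    weighted_sum = 0.0
    effective_total = 0.0
    for ind in indicators:
        effective = ind.weight * ind.confidence
        weighted_sum += effective * ind.score
        effective_total += effective
    if effective_total == 0.0:
        raise ValueError("no indicator carries any effective weight")
    return weighted_sum / effective_total


if __name__ == "__main__":
    frame = [
        Indicator("focus", score=0.9, confidence=1.0, weight=2.0),
        Indicator("exposure", score=0.5, confidence=0.5, weight=1.0),
        # Zero confidence: this reading contributes nothing to the total.
        Indicator("shake", score=0.2, confidence=0.0, weight=1.0),
    ]
    print(round(total_quality(frame), 2))  # 0.82
```

Because confidence multiplies the weight, an indicator known to be unreliable drops out instead of dragging the total score down.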

The claims of ’325 are directed to a method performed by a processor in a device that contains a camera module and motion or location sensors. The method obtains a value for each of four indicators (device motion, image under- or over-exposure, face properties, and lens obstruction) and estimates a weight for each value. The key limitation is that at least one of these values or weights is calculated by a deep learning algorithm. Based on the values and weights, the method selects a suggestion and presents it to the user as guidance on how to improve the image.
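The claimed flow (four values, four weights, then a suggestion) might look like the sketch below. The suggestion texts, the `SUGGESTIONS` table, the `select_suggestion` function, the threshold, and the rule of picking the indicator with the largest weighted shortfall are all illustrative assumptions. In the claims at least one value or weight comes from a deep learning model; here that model is not modeled at all, and the values and weights are simply passed in.

```python
from typing import Dict, Optional

# Hypothetical suggestion table: one hint per claimed indicator.
SUGGESTIONS: Dict[str, str] = {
    "motion": "Hold the device steady.",
    "exposure": "Move to better light or adjust exposure.",
    "face": "Make sure the face is fully visible.",
    "obstruction": "Clean the lens or remove whatever covers it.",
}


def select_suggestion(
    values: Dict[str, float],
    weights: Dict[str, float],
    threshold: float = 0.2,
) -> Optional[str]:
    """Return the suggestion for the indicator with the largest weighted shortfall.

    values: quality per indicator in [0, 1], where 1 is best (assumed convention).
    weights: importance of each indicator (precomputed inputs in this sketch).
    Returns None when no weighted shortfall reaches the threshold.
    """
    worst_name: Optional[str] = None
    worst_penalty = 0.0
    for name, value in values.items():
        penalty = weights[name] * (1.0 - value)  # how far below ideal, scaled by weight
        if penalty > worst_penalty:
            worst_name, worst_penalty = name, penalty
    if worst_name is None or worst_penalty < threshold:
        return None  # image is good enough; no suggestion needed
    return SUGGESTIONS[worst_name]


if __name__ == "__main__":
    values = {"motion": 0.9, "exposure": 0.3, "face": 0.8, "obstruction": 1.0}
    weights = {"motion": 1.0, "exposure": 1.0, "face": 0.5, "obstruction": 2.0}
    print(select_suggestion(values, weights))  # Move to better light or adjust exposure.
```

A table lookup keeps the user-facing text separate from the scoring logic, so suggestions can be reworded or localized without touching how the values and weights are computed.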

In practice, the system continuously analyzes the incoming image stream and sensor data, computing quality indicators for various aspects of the image. For example, accelerometer data is used to assess device shake, while image analysis algorithms detect blur and identify faces. The confidence level assigned to each indicator reflects its reliability, taking into account sensor errors and algorithm assumptions. These indicators are then combined, with weights adjusted based on their relevance and confidence, to produce a total quality score.
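As an illustration of the shake indicator, the sketch below derives a score and a confidence level from a window of accelerometer samples. It assumes samples in m/s², uses the population standard deviation of the acceleration magnitude as a simple shake measure, and sets confidence by how many samples were available. `shake_indicator`, `max_std`, and `min_samples` are hypothetical, and the patent's actual computation may differ.

```python
import statistics
from typing import Sequence, Tuple

Sample = Tuple[float, float, float]  # (x, y, z) acceleration in m/s^2


def shake_indicator(
    samples: Sequence[Sample],
    max_std: float = 2.0,
    min_samples: int = 10,
) -> Tuple[float, float]:
    """Return (score, confidence) for device shake over one capture window.

    score: 1.0 for a steady device, falling linearly to 0.0 when the standard
    deviation of the acceleration magnitude reaches max_std.
    confidence: grows with the number of samples, reaching 1.0 at min_samples.
    """
    if not samples:
        return 0.0, 0.0  # no data: no usable score and no confidence
    magnitudes = [(x * x + y * y + z * z) ** 0.5 for x, y, z in samples]
    # A steady device reads a roughly constant magnitude (gravity),
    # so the spread of the magnitudes is used as the shake measure.
    spread = statistics.pstdev(magnitudes)
    score = max(0.0, 1.0 - spread / max_std)
    confidence = min(1.0, len(samples) / min_samples)
    return score, confidence


if __name__ == "__main__":
    steady = [(0.0, 0.0, 9.81)] * 20
    shaky = [(0.0, 0.0, 8.81), (0.0, 0.0, 10.81)] * 2
    print(shake_indicator(steady))  # (1.0, 1.0)
    print(shake_indicator(shaky))   # score close to 0.5, confidence 0.4
```

Tying confidence to the amount of data is one simple way to express reliability; sensor error models or algorithm assumptions, as the text mentions, could lower it further.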

This approach differentiates itself from prior solutions by dynamically adjusting the weights of quality indicators based on the context and reliability of other indicators. For instance, if the device shake indicator is unreliable, the system might reduce the weight of aesthetic quality indicators. Furthermore, the system uses the combined quality assessment to provide real-time feedback and suggestions to the user, guiding them to adjust camera settings or reposition the device to capture a better image. The system can also automatically select the best image from a burst or video stream based on the total quality score.
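The dynamic re-weighting and best-frame selection described above can be sketched as follows. It assumes per-frame indicator scores and confidences in [0, 1] and a hypothetical dependency map in which the weight of the aesthetic indicator is scaled by the confidence of the shake indicator (the example given in the text). `Frame`, `DEPENDENCIES`, `adjust_weights`, `total_score`, and `best_frame` are illustrative names, not the patent's.

```python
from dataclasses import dataclass
from typing import Dict, List, Mapping

# Hypothetical dependency map: each key's weight is scaled by the confidence
# of the indicator it names (aesthetics count only if shake is reliable).
DEPENDENCIES: Mapping[str, str] = {"aesthetic": "shake"}


@dataclass
class Frame:
    frame_id: int
    scores: Dict[str, float]       # per-indicator quality in [0, 1]
    confidences: Dict[str, float]  # per-indicator reliability in [0, 1]


def adjust_weights(
    base: Dict[str, float],
    confidences: Dict[str, float],
    dependencies: Mapping[str, str] = DEPENDENCIES,
) -> Dict[str, float]:
    """Scale each dependent weight by the confidence of the indicator it depends on."""
    adjusted = dict(base)
    for dependent, source in dependencies.items():
        if dependent in adjusted:
            adjusted[dependent] *= confidences.get(source, 1.0)
    return adjusted


def total_score(
    frame: Frame,
    base_weights: Dict[str, float],
    dependencies: Mapping[str, str] = DEPENDENCIES,
) -> float:
    """Confidence-weighted mean of the frame's scores, using adjusted weights."""
    weights = adjust_weights(base_weights, frame.confidences, dependencies)
    effective = {k: w * frame.confidences[k] for k, w in weights.items()}
    total = sum(effective.values())
    if total == 0.0:
        return 0.0
    return sum(effective[k] * frame.scores[k] for k in effective) / total


def best_frame(
    frames: List[Frame],
    base_weights: Dict[str, float],
    dependencies: Mapping[str, str] = DEPENDENCIES,
) -> Frame:
    """Pick the frame of a burst or video stream with the highest total score."""
    return max(frames, key=lambda f: total_score(f, base_weights, dependencies))


if __name__ == "__main__":
    base = {"shake": 1.0, "focus": 1.0, "aesthetic": 1.0}
    burst = [
        Frame(1, {"shake": 0.9, "focus": 0.9, "aesthetic": 0.6},
              {"shake": 1.0, "focus": 1.0, "aesthetic": 1.0}),
        Frame(2, {"shake": 0.5, "focus": 0.7, "aesthetic": 1.0},
              {"shake": 0.1, "focus": 1.0, "aesthetic": 1.0}),
    ]
    print(best_frame(burst, base).frame_id)                   # 1
    print(best_frame(burst, base, dependencies={}).frame_id)  # 2
```

Without the dependency, frame 2 wins on its aesthetic score; scaling that weight by the low shake confidence hands the selection to frame 1.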

How does this patent fit in bigger picture?

Technical landscape at the time

In the early 2010s, when the patent family to which ’325 belongs originated (’325 itself is a later filing from 2021), cameras, even in their “auto mode”, still required users to assess image quality, often leading them to take multiple shots to ensure a good one. Image enhancement was typically done after capture in software such as Photoshop, and hardware and software constraints made real-time image quality assessment and user assistance non-trivial.

Novelty and Inventive Step

The examiner allowed the application because the prior art does not teach the combination of: obtaining a first value responsive to device motion and estimating a first weight associated with it; obtaining a second value representing under- or over-exposure and estimating a second weight; analyzing the captured image to detect a face and its properties, then obtaining a third value and estimating a third weight; and obtaining a fourth value responsive to lens obstruction and estimating a fourth weight. At least one of the values or weights is calculated using a neural network (deep learning), and the results are used to select a suggestion from a table that helps the user improve the image.

Claims

This patent contains 20 claims, with independent claims 1 and 11 directed to methods for estimating image quality using motion sensors, image analysis, and weighting factors within a device containing a camera. The dependent claims generally elaborate on specific aspects, calculations, and refinements of the methods described in the independent claims.

Key Claim Terms

Definitions of key terms used in the patent claims.

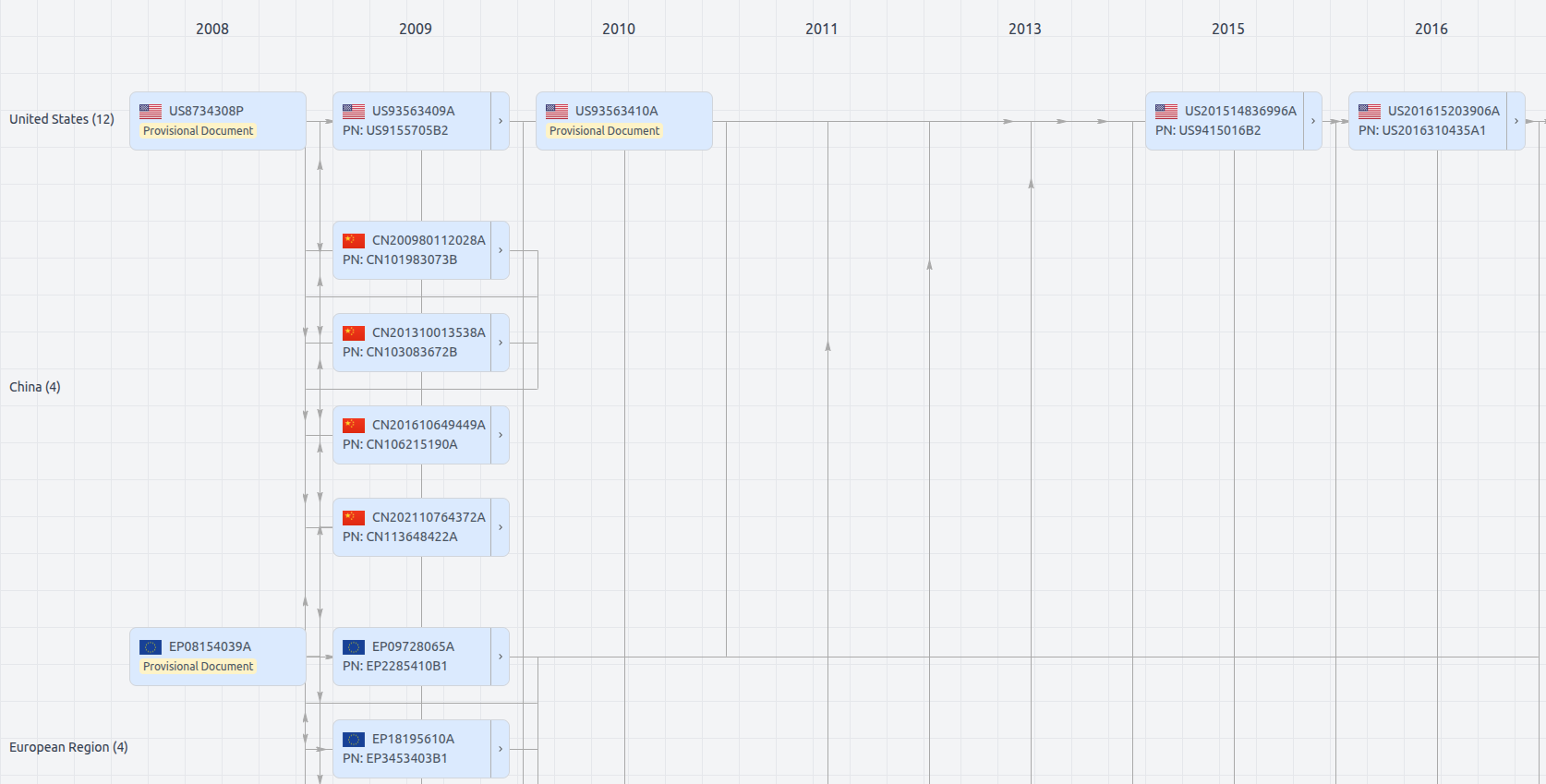


File Wrapper

The dossier documents record the patent's prosecution history, including filings, correspondence, and decisions by patent offices, and help in understanding the patent's legal history and any challenges it faced during examination.


US11252325

- Application Number: US17189587
- Filing Date: Mar 2, 2021
- Status: Granted
- Expiry Date: Oct 22, 2033
