Vector Computational Unit

Patent No. US11409692 (titled "Vector Computational Unit") was filed by Tesla Inc on Mar 13, 2018.

What is this patent about?

’692 is related to the field of microprocessor design, specifically concerning architectures optimized for machine learning and artificial intelligence workloads. Traditional CPUs and GPUs, while capable, are not ideally suited for the repetitive, parallel mathematical operations common in these applications. The patent addresses the need for a specialized microprocessor system that can efficiently handle large datasets and specific processing operations required by machine learning algorithms, such as convolutional neural networks .

The underlying idea behind ’692 is to create a microprocessor architecture that combines a computational array with a vector computational unit to accelerate machine learning tasks. The computational array, acting as a matrix processor, performs parallel arithmetic operations on input vectors. The key inventive insight is to then feed the output of this array into a vector computational unit, which further processes the data in parallel using a plurality of processing elements. This pipelined architecture allows for efficient execution of complex mathematical operations on large datasets.

The claims of ’692 focus on a microprocessor system comprising a computational array and a vector computational unit. The computational array consists of computation units arranged in lanes, each forming a FIFO queue . These queues receive data elements in parallel from a vector input module and shift them to the vector computational unit. The vector computational unit, in turn, processes these data elements in parallel to generate a processing result. The claims emphasize the parallel processing capabilities and the communication between the computational array and the vector computational unit.

In practice, the computational array can be implemented as a matrix processor with computation units performing multiply-accumulate operations, effectively calculating dot products required for convolution. The vector computational unit then applies activation functions or other non-linear transformations to the output of the matrix processor. The use of FIFO queues in the computational array ensures a smooth flow of data to the vector computational unit, preventing bottlenecks and maximizing throughput. This architecture is particularly well-suited for processing image data in convolutional neural networks.

This design differentiates itself from prior approaches by providing a dedicated hardware architecture optimized for machine learning operations. Unlike general-purpose CPUs or GPUs that rely on multiple processing cores and complex instruction sets, ’692 utilizes a specialized computational array and vector computational unit to perform parallel processing with minimal overhead. The instruction set architecture is streamlined for vector operations, and the data flow is optimized for efficient execution of machine learning algorithms. This results in improved performance and energy efficiency compared to traditional processing units.

How does this patent fit in bigger picture?

Technical landscape at the time

In the late 2010s when ’692 was filed, at a time when machine learning and artificial intelligence algorithms were increasingly deployed, systems commonly relied on parallel processing architectures to accelerate computations. Hardware or software constraints made efficient data transfer and synchronization between processing units non-trivial.

Novelty and Inventive Step

The examiner approved the application because the prior art, specifically Phelps (US 2018/0336164), did not teach a computational array with computation units grouped into FIFO lanes, where a subset of these units receives data from a vector input module in parallel. Furthermore, Phelps did not disclose the specific parallel connection between the last row of computation units and processing elements, nor the shifting of data through FIFO queues formed by columns of computation units.

Claims

This patent includes 26 claims, with independent claims 1, 23, and 25. Independent claims focus on a microprocessor system, a vector computational unit within such a system, and a method of processing data using the vector computational unit, respectively. The dependent claims generally elaborate on and refine the features and functionalities described in the independent claims, providing more specific details about the system's components and their interactions.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

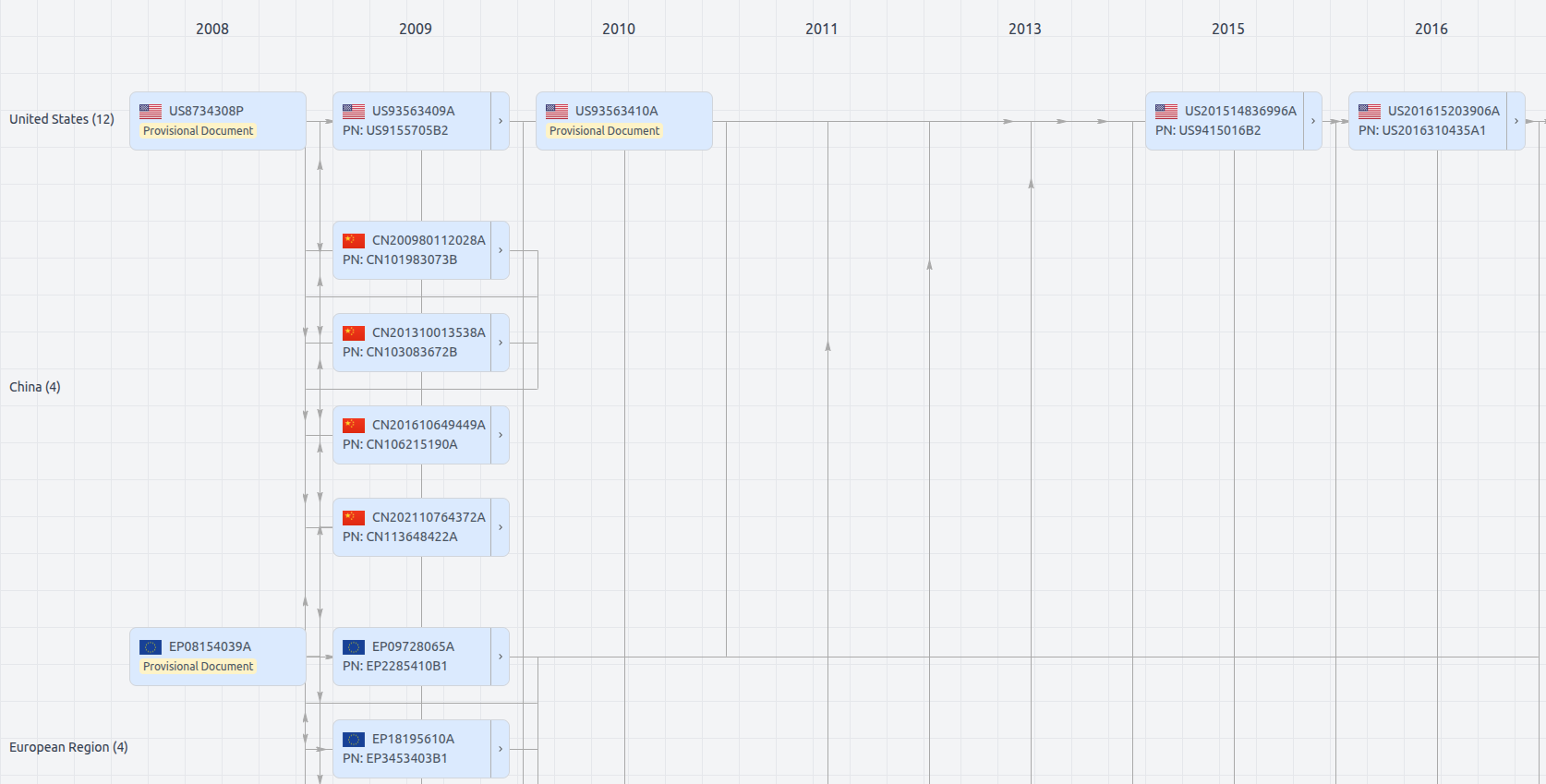

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

Date

Description

Get instant alerts for new documents

US11409692

- Application Number

- US15920156

- Filing Date

- Mar 13, 2018

- Status

- Granted

- Expiry Date

- Sep 20, 2037

- External Links

- Slate, USPTO, Google Patents