Vector Computational Unit Receiving Data Elements In Parallel From A Last Row Of A Computational Array

Patent No. US11561791 (titled "Vector Computational Unit Receiving Data Elements In Parallel From A Last Row Of A Computational Array") was filed by Tesla Inc on Mar 13, 2018.

What is this patent about?

’791 is related to the field of microprocessor design, specifically focusing on accelerating machine learning and artificial intelligence workloads. Traditional CPUs and GPUs, while capable, are not optimized for the repetitive, parallel operations common in machine learning, leading to inefficiencies. The patent addresses the need for a specialized microprocessor system that can efficiently handle these operations on large datasets.

The underlying idea behind ’791 is to create a vector computational unit that can execute multiple instructions in parallel across a large vector of data. This is achieved by combining a computational array (matrix processor) with a vector engine, where the computational array performs initial calculations, and the vector engine applies further processing, such as activation functions. The key insight is to use a single processor instruction to trigger multiple component instructions within the vector computational unit, maximizing parallelism and minimizing overhead.

The claims of ’791 focus on a microprocessor system comprising a vector computational unit with multiple processing elements, each connected to a computation unit in a computational array. The computation units are arranged in lanes with FIFO queues. The control unit synchronizes data receipt and provides a single processor instruction specifying at least three different component instructions. These component instructions utilize different hardware resources within each processing element, such as the arithmetic logic unit (ALU) , and are executed with staggered starts across different processor instructions.

In practice, the system operates by feeding data through the FIFO queues of the computational array to the vector computational unit. The control unit then issues a single processor instruction that triggers a sequence of operations within each processing element of the vector computational unit. For example, a single instruction might initiate a load operation, an ALU operation (like ReLU), and a store operation, all executing in parallel on different data elements. This instruction bundling and parallel execution significantly speeds up processing.

This approach differs from traditional processors by avoiding the overhead of managing multiple processor cores for each parallel operation. Instead, the vector computational unit's architecture allows for efficient parallel processing of large datasets with minimal instruction overhead. The use of staggered starts for different component instructions further optimizes resource utilization, ensuring that the ALU and other hardware resources are continuously active, leading to improved performance in machine learning tasks.

How does this patent fit in bigger picture?

Technical landscape at the time

In the late 2010s when ’791 was filed, at a time when machine learning and artificial intelligence applications were gaining traction, systems commonly relied on parallel processing architectures to handle large datasets. At that time, GPUs were often used to accelerate matrix operations, but hardware or software constraints made it non-trivial to efficiently execute specific machine learning operations on large datasets in parallel without incurring significant overhead from managing multiple processing cores.

Novelty and Inventive Step

The examiner approved the application because the prior art of record, even when combined, did not teach a vector computational unit with specific features. These features include a plurality of processing elements, each connected to a corresponding computation unit in the last row of a computational array. The computation units are grouped into lanes, each forming a FIFO queue. A subset of these computation units receives data elements from a vector input module in parallel and shifts them through the FIFO queues, providing each data element in parallel from the last row of computation units to the corresponding processing elements. The closest prior art (Phelps) taught a computational array and a vector computational unit, but it did not disclose the specific parallel connection between the last row of computation units and processing elements, nor the shifting of data through FIFO queues formed by columns of computation units.

Claims

This patent contains 16 claims, with independent claims 1 and 16. Independent claim 1 is directed to a microprocessor system comprising a vector computational unit and a control unit circuit, while independent claim 16 is directed to a method involving receiving and decoding processor instructions and using a vector computational unit to execute component instructions. The dependent claims generally elaborate on and provide specific details and features of the microprocessor system and its operation, further defining the components and instructions used.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

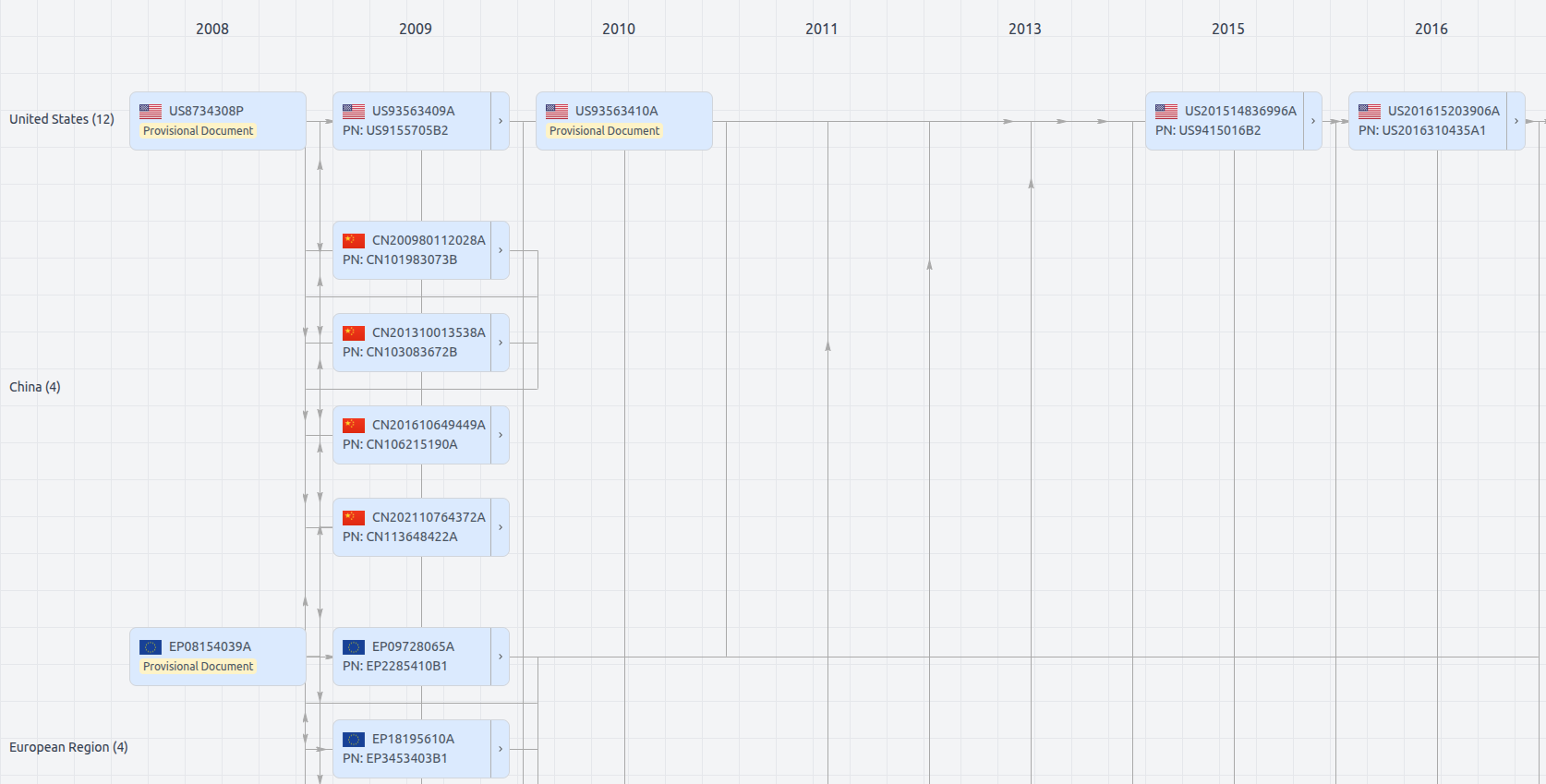

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

Get instant alerts for new documents

US11561791

- Application Number

- US15920165

- Filing Date

- Mar 13, 2018

- Status

- Granted

- Expiry Date

- Mar 13, 2038

- External Links

- Slate, USPTO, Google Patents