Neural Networks For Embedded Devices

Patent No. US11562231 (titled "Neural Networks For Embedded Devices") was filed by Deepscale Inc on Sep 3, 2019.

What is this patent about?

’231 is related to the field of deep neural networks (DNNs), specifically concerning their deployment on resource-constrained devices like those found in Internet-of-Things (IoT) applications. The background involves the challenge of implementing complex neural networks, typically requiring high-precision floating-point arithmetic, on devices with limited processing power and memory, often restricted to lower-bit integer arithmetic (e.g., 8-bit). This necessitates architectural innovations to reduce computational load and prevent data overflow.

The underlying idea behind ’231 is to design a neural network architecture, named StarNet, that can operate efficiently on reduced-bit processors by carefully managing the size and quantization of filter weights and input activations. The key inventive insight is to constrain the dimensionality of filters and the bit-widths of activations and weights to prevent arithmetic overflow during calculations, while also incorporating a novel "star-conv" filter structure to reduce the number of elements per filter.

The claims of ’231 focus on a method, a non-transitory computer-readable medium, and a system for generating a neural network structure tailored for devices with limited bit-length registers. The core of the claims involves determining appropriate integer representations for input layers and filters, and then generating dimensionalities for these layers and filters such that the output of their combination does not exceed the register's bit-length. A key feature is the use of star-shaped filters , which only use non-diagonal elements of a 3x3 grid, reducing the number of computations.

In practice, the StarNet architecture achieves efficient computation by employing a combination of techniques. First, the number of elements in each filter is limited (e.g., to 32 elements). Second, linear quantization is applied to both filter weights and input activations, mapping floating-point values to lower-bit integer representations. The bit-widths are chosen such that the maximum possible output value of a convolution operation remains within the representable range of the 8-bit registers, preventing overflow. A "star-shuffle block," consisting of 1x1 convolutions, ReLU activations, star convolutions, and shuffle layers, is used as a recurring module.

The "star-conv" filter is a key differentiator from traditional convolutional layers. By using only the immediate top, bottom, left, and right neighbors of a pixel, instead of all nine pixels in a 3x3 grid, the number of elements per filter is reduced. This allows for more bits to be used to represent the weights and activations, improving accuracy. Furthermore, the "shuffle" layer interleaves the ordering of channels to enable communication across channels, addressing the reduction in representational power that can occur when using group convolutions with a group-length greater than 1.

How does this patent fit in bigger picture?

Technical landscape at the time

In the late 2010s when ’231 was filed, deep neural networks were typically implemented using floating-point arithmetic on complex processors. At a time when systems commonly relied on 32-bit operations, hardware or software constraints made implementing neural networks on low-cost, low-power devices with reduced-bit arithmetic and storage non-trivial.

Novelty and Inventive Step

The examiner approved the application primarily because the claims include specific details of a neural network structure. This includes determining a bit length of registers for arithmetic operations, determining ranges of integer values, generating dimensionalities of input layers by combining elements of an input layer with elements of a corresponding filter to prevent overflow, and using star-shaped filters with zero weight values in the corners of a 3x3 rectangle. These features, in combination with other recited elements, were not found in the prior art.

Claims

This patent contains 17 claims, with independent claims 1, 7, and 13. The independent claims are directed to a method, a computer-readable medium, and a system for generating a neural network structure by determining dimensionalities of input layers and filters based on register bit length to avoid overflow. The dependent claims generally elaborate on the quantization process, the inclusion of a shuffle layer, and the bit length of the registers.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

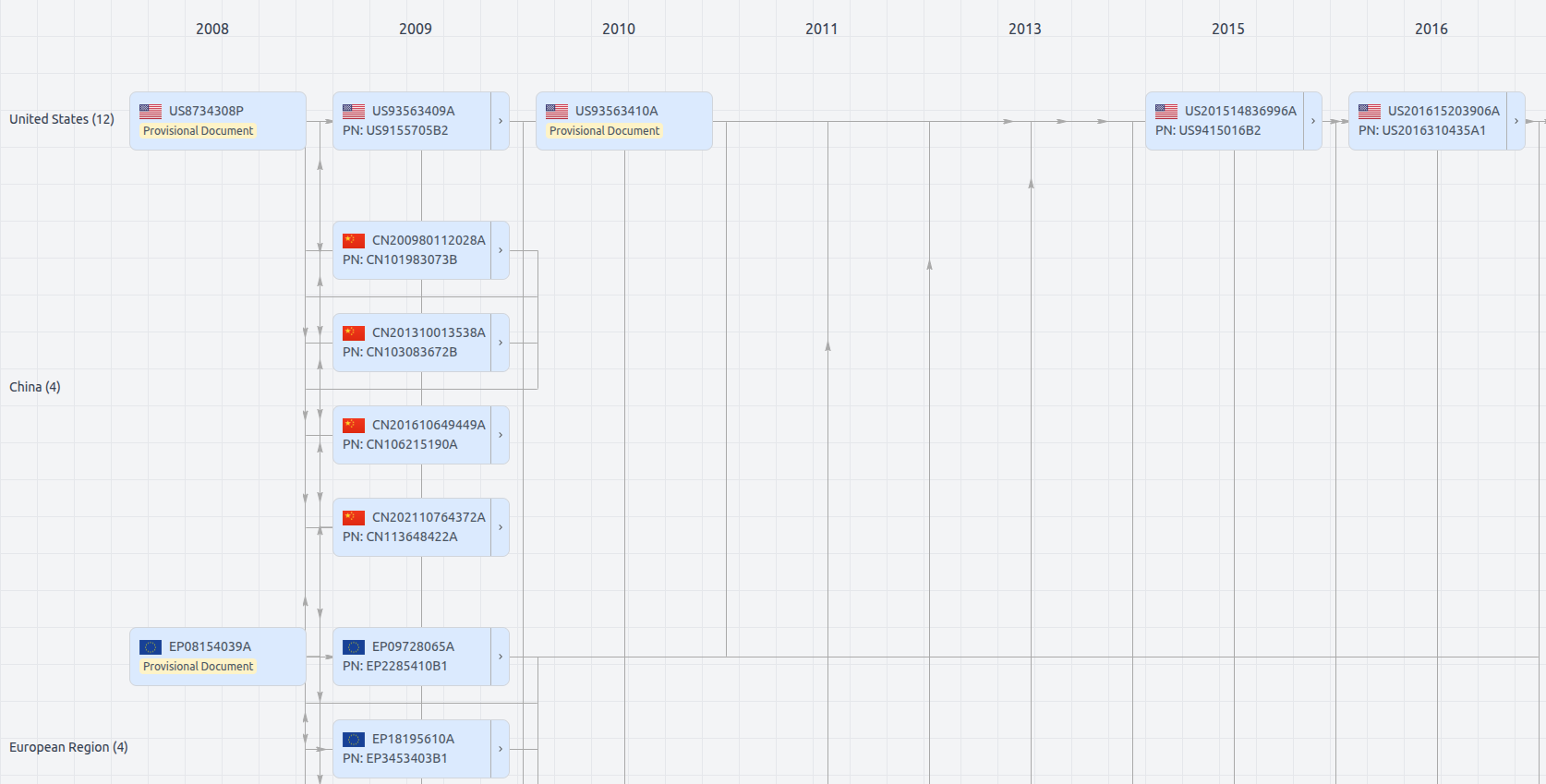

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

Date

Description

Get instant alerts for new documents

US11562231

- Application Number

- US16559483

- Filing Date

- Sep 3, 2019

- Status

- Granted

- Expiry Date

- May 19, 2041

- External Links

- Slate, USPTO, Google Patents