Annotation Cross-Labeling For Autonomous Control Systems

Patent No. US11841434 (titled "Annotation Cross-Labeling For Autonomous Control Systems") was granted to Tesla Inc on an application (US17806358) filed on Jun 10, 2022.

What is this patent about?

’434 is related to the field of autonomous vehicle systems and, more specifically, to the training of computer models used in such systems. Autonomous vehicles rely on sensors like cameras and LIDAR to perceive their environment. Training the computer models that interpret this sensor data requires accurately labeled data, a process that can be time-consuming and computationally expensive, especially for 3D data like LIDAR point clouds.

The underlying idea behind ’434 is to leverage readily available and easily annotated 2D image data to assist in the annotation of more complex 3D sensor data. The system uses the 2D annotation of an object in an image, along with the camera's viewpoint, to project a spatial region into the 3D space of the LIDAR data. This region then serves as a focused search area for annotating the corresponding object in the 3D point cloud.
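The projection step described above can be sketched as follows. This is a minimal illustration, not the patent's implementation: it assumes an ideal pinhole camera without lens distortion, and the names `Box2D`, `PinholeCamera`, `corner_rays`, and `frustum_vertices` are hypothetical. It back-projects the four corners of a 2D bounding box into rays in the camera frame and truncates them at assumed near and far depths to obtain the eight vertices of a spatial region.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Box2D:
    """Hypothetical 2D annotation: pixel bounds of a labeled object."""
    u_min: float
    v_min: float
    u_max: float
    v_max: float


@dataclass
class PinholeCamera:
    """Assumed ideal pinhole intrinsics (no lens distortion), in pixels."""
    fx: float
    fy: float
    cx: float
    cy: float


def corner_rays(box: Box2D, cam: PinholeCamera) -> List[Vec3]:
    """Back-project the four box corners to ray directions in the camera
    frame (x right, y down, z forward), scaled so that z == 1."""
    corners = [
        (box.u_min, box.v_min),
        (box.u_max, box.v_min),
        (box.u_max, box.v_max),
        (box.u_min, box.v_max),
    ]
    return [((u - cam.cx) / cam.fx, (v - cam.cy) / cam.fy, 1.0) for u, v in corners]


def frustum_vertices(box: Box2D, cam: PinholeCamera, near: float, far: float) -> List[Vec3]:
    """Eight vertices of the truncated pyramid between depths near and far:
    the four near-plane corners first, then the four far-plane corners."""
    if not 0.0 < near < far:
        raise ValueError("expected 0 < near < far")
    return [(d * x, d * y, d * z) for d in (near, far) for (x, y, z) in corner_rays(box, cam)]


if __name__ == "__main__":
    cam = PinholeCamera(fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
    box = Box2D(u_min=540.0, v_min=260.0, u_max=740.0, v_max=460.0)
    for vertex in frustum_vertices(box, cam, near=1.0, far=50.0):
        print(vertex)
```

The vertices are expressed in the camera frame. Relating them to the LIDAR data would additionally require the camera's pose relative to the LIDAR sensor, which this sketch leaves out.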

The claims of ’434 focus on a method, system, and storage medium for annotating sensor measurements. Specifically, the claims cover obtaining a 2D image with an object annotated, obtaining 3D sensor measurements of the same scene, determining a spatial region in the 3D space corresponding to the 2D annotation and the camera's viewpoint, and then annotating the 3D sensor measurements within that spatial region to locate the object in 3D.

In practice, the system would first identify an object in a 2D camera image and create a bounding box around it. Knowing the camera's position and orientation, the system then projects a viewing frustum from the camera's perspective into the 3D LIDAR point cloud. This frustum defines a limited volume in the 3D space where the corresponding object is likely to be found. An annotation model is then applied only to the data within this frustum, significantly reducing the computational burden and improving annotation accuracy.
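A rough sketch of this frustum-restricted annotation step is shown below. Several things here are assumptions made for illustration: the pinhole camera model, the rigid LIDAR-to-camera transform `(R, t)`, the near and far depth limits, and all function names. In particular, the axis-aligned box fitted at the end is only a placeholder for the annotation model mentioned above, which this summary does not describe in detail.

```python
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Box2D = Tuple[float, float, float, float]       # (u_min, v_min, u_max, v_max) in pixels
Intrinsics = Tuple[float, float, float, float]  # (fx, fy, cx, cy), assumed pinhole model


def lidar_to_camera(p: Vec3, R: Sequence[Sequence[float]], t: Vec3) -> Vec3:
    """Apply an assumed rigid transform p_cam = R @ p + t (R given as 3 rows)."""
    x, y, z = (sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))
    return (x, y, z)


def in_frustum(p_cam: Vec3, box: Box2D, intr: Intrinsics, near: float, far: float) -> bool:
    """True if the point lies between the near and far depths and its
    pinhole projection falls inside the 2D bounding box."""
    x, y, z = p_cam
    if not near <= z <= far:
        return False
    fx, fy, cx, cy = intr
    u = fx * x / z + cx
    v = fy * y / z + cy
    u_min, v_min, u_max, v_max = box
    return u_min <= u <= u_max and v_min <= v <= v_max


def annotate_in_region(
    points: List[Vec3],
    box: Box2D,
    intr: Intrinsics,
    R: Sequence[Sequence[float]],
    t: Vec3,
    near: float = 0.5,
    far: float = 100.0,
) -> Optional[Tuple[Vec3, Vec3, int]]:
    """Keep only LIDAR points inside the frustum, then fit a placeholder
    axis-aligned 3D box (min corner, max corner, point count) around them."""
    if not 0.0 < near < far:
        raise ValueError("expected 0 < near < far")
    subset = [p for p in points if in_frustum(lidar_to_camera(p, R, t), box, intr, near, far)]
    if not subset:
        return None
    lo = (min(p[0] for p in subset), min(p[1] for p in subset), min(p[2] for p in subset))
    hi = (max(p[0] for p in subset), max(p[1] for p in subset), max(p[2] for p in subset))
    return lo, hi, len(subset)


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    cloud = [(0.0, 0.0, 10.0), (0.5, 0.0, 10.0), (2.0, 0.0, 10.0), (0.0, 0.0, -5.0)]
    print(annotate_in_region(cloud, (540.0, 260.0, 740.0, 460.0),
                             (1000.0, 1000.0, 640.0, 360.0), identity, (0.0, 0.0, 0.0)))
```

A frustum usually also contains background and ground points behind or below the object, so a plain bounding box over everything inside it would tend to be too large. That is one reason a learned or clustering-based annotator would be applied to the filtered points rather than the naive fit used here.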

This approach differs from prior methods that either rely on manual 3D annotation, which is labor-intensive, or apply annotation models to the entire 3D point cloud, which is computationally expensive and prone to errors due to the sparsity of LIDAR data. By using the 2D image annotation as a guide, ’434 effectively narrows down the search space in the 3D data, leading to more efficient and accurate 3D annotation for training autonomous vehicle systems.

How does this patent fit in the bigger picture?

Technical landscape at the time

In the late 2010s (the application behind ’434 was filed in 2022, but its Aug 21, 2039 expiry date suggests an earlier priority date), autonomous systems commonly relied on sensor fusion techniques to perceive their environment, with LIDAR and camera data typically processed separately before being combined. Annotating 3D point clouds from LIDAR sensors was non-trivial: manual annotation was still common practice, and the computational cost of processing large datasets was a significant bottleneck.

Novelty and Inventive Step

The examiner approved the application because the prior art, whether considered individually or in combination, did not disclose or suggest the specific claim elements related to determining a spatial region in 3D space based on the viewpoint of an image sensor relative to a first annotation, and then using this spatial region to identify a second annotation within a subset of the 3D space. The examiner found that the applicant's arguments regarding the limitations of the closest prior art were convincing.

Claims

This patent contains 20 claims, with independent claims 1, 10, and 19. The independent claims are directed to a method, a non-transitory computer-readable storage medium, and a system, respectively, all generally focused on annotating sensor measurements based on an image of a real-world scene. The dependent claims generally elaborate on and refine the elements and steps recited in the independent claims.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

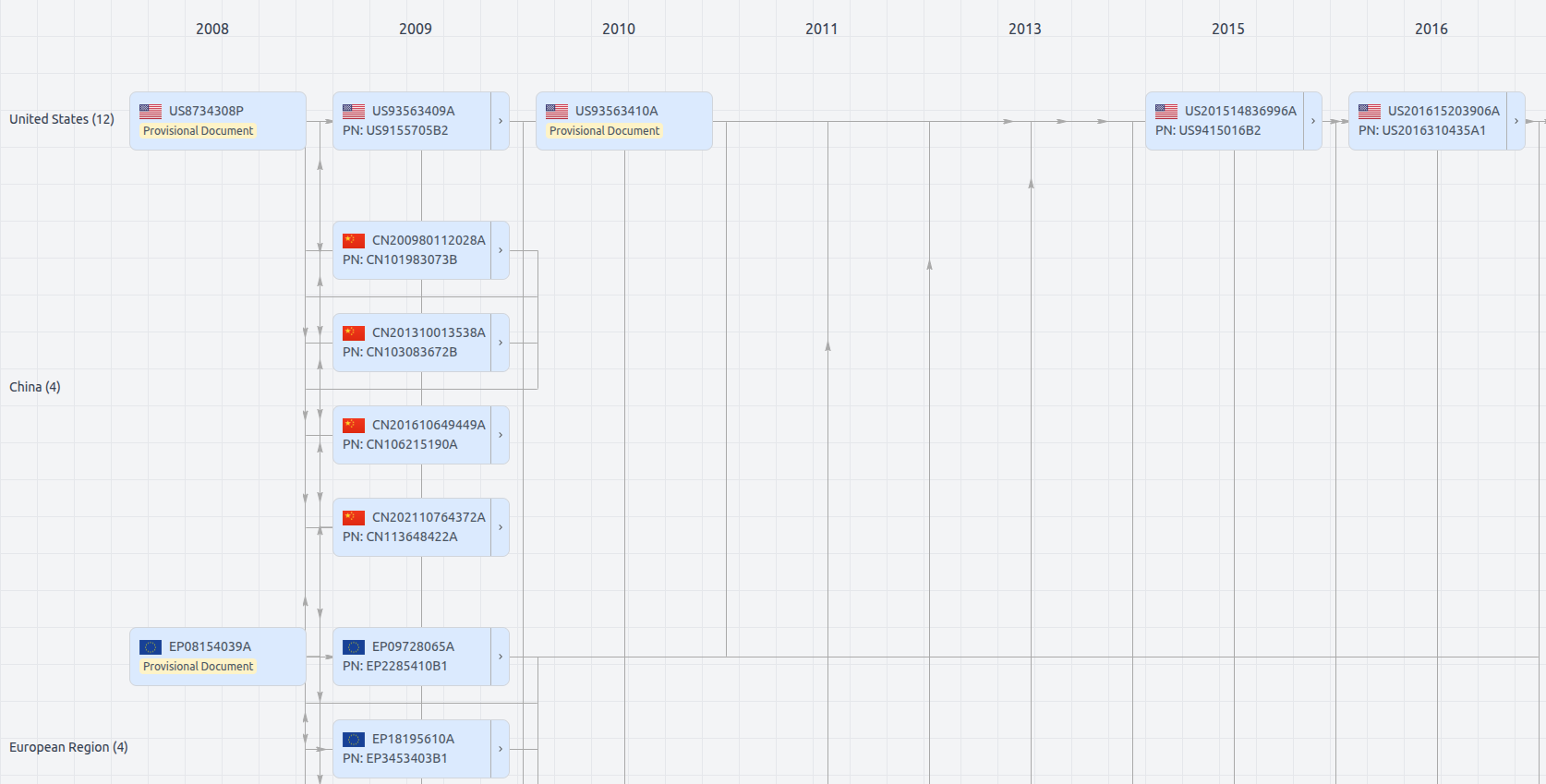

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

US11841434

- Application Number: US17806358
- Filing Date: Jun 10, 2022
- Status: Granted
- Expiry Date: Aug 21, 2039
