Gpu Chiplets Using High Bandwidth Crosslinks

Patent No. US11841803 (titled "Gpu Chiplets Using High Bandwidth Crosslinks") was filed by Onesta Ip Llc on Jun 28, 2019.

What is this patent about?

’803 is related to the field of multi-chip module (MCM) design, specifically addressing the challenge of integrating increasingly complex graphics processing units (GPUs) into smaller spaces. Traditional monolithic GPU designs are becoming expensive to manufacture, and while chiplets have been used in CPUs, applying them to GPUs is difficult due to the need for synchronous ordering and memory coherence across parallel workloads.

The underlying idea behind ’803 is to use a passive interposer to connect multiple GPU chiplets, creating a unified GPU that appears as a single device to the CPU and applications. This allows for a larger, more powerful GPU without requiring changes to existing software or programming models. The key is maintaining cache coherence across the chiplets, particularly at the last-level cache (LLC).

The claims of ’803 focus on a system comprising a CPU and a GPU chiplet array. The array includes a first GPU chiplet connected to the CPU via a bus, and a second GPU chiplet connected to the first via a passive crosslink . This crosslink is specifically for inter-chiplet communication. The method claims cover receiving a memory access request at a first GPU chiplet, determining which chiplet caches the data, routing the request via the passive crosslink to the caching chiplet's last-level cache, and returning the data to the CPU.

In practice, the CPU sends memory requests to a designated 'master' GPU chiplet. This chiplet then uses the passive crosslink to communicate with other 'slave' chiplets to locate the requested data in their respective L3 caches. The passive crosslink acts as a high-bandwidth interconnect, allowing the master chiplet to access the L3 caches of other chiplets with low latency. This ensures that the entire GPU chiplet array appears as a single, coherent memory space to the CPU.

This approach differs from prior solutions by using a passive interposer without through-silicon vias (TSVs) for inter-chiplet communication. The passive crosslink simplifies manufacturing and reduces cost compared to active interposers. By maintaining cache coherence at the L3 level, the system avoids the need for complex inter-chiplet coherency protocols, allowing existing GPU programming models to be used without modification. The dedicated communication channels between chiplets further optimize data transfer and reduce latency.

How does this patent fit in bigger picture?

Technical landscape at the time

In the late 2010s when ’803 was filed, GPUs were commonly implemented as monolithic dies, at a time when increasing die sizes were driving up manufacturing costs. At this time, systems commonly relied on cache coherent memory architectures, and hardware or software constraints made it non-trivial to extend cache coherence across multiple physical dies.

Novelty and Inventive Step

The examiner approved the application because the claims include a GPU chiplet array with a first GPU chiplet connected to the CPU via a bus, and a second GPU chiplet connected to the first via a passive crosslink dedicated for inter-chiplet communication. The examiner determined that the prior art did not anticipate or render obvious this specific combination.

Claims

This patent contains 20 claims, with independent claims 1, 11, and 16. Independent claim 1 is directed to a system comprising a CPU communicably coupled to a GPU chiplet array. Independent claims 11 and 16 are directed to a method and a non-transitory computer readable medium, respectively, both relating to routing memory access requests within a GPU chiplet array. The dependent claims generally elaborate on and refine the features and functionalities described in the independent claims.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

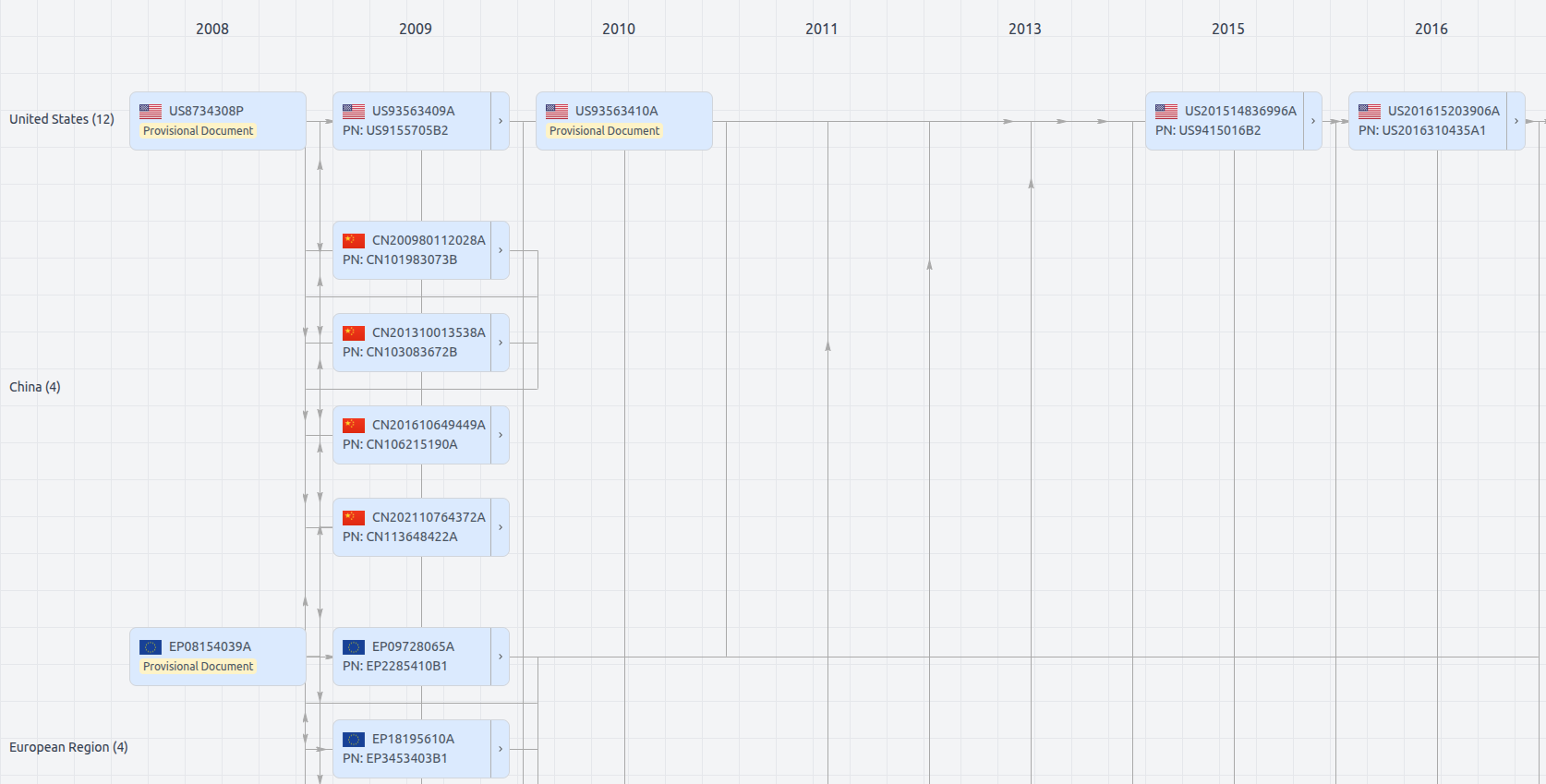

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

Get instant alerts for new documents

US11841803

- Application Number

- US16456287

- Filing Date

- Jun 28, 2019

- Status

- Granted

- Expiry Date

- Jan 6, 2040

- External Links

- Slate, USPTO, Google Patents