Systems And Methods For Generating Of 3D Information On A User Display From Processing Of Sensor Data For Objects, Components Or Features Of Interest In A Scene And User Navigation Thereon

Patent No. US11935288 (titled "Systems And Methods For Generating Of 3D Information On A User Display From Processing Of Sensor Data For Objects, Components Or Features Of Interest In A Scene And User Navigation Thereon") was filed by Pointivo Inc on Jan 3, 2022.

What is this patent about?

’288 is related to the field of remote inspection using sensor data, specifically addressing the challenge of visualizing and interpreting sensor data acquired for objects of interest in a scene. Traditional methods often involve either in-person inspections, which can be dangerous or limited, or remote viewing of previously captured data, which can suffer from data gaps and a lack of real-time context. The patent aims to bridge the gap between these approaches by providing an improved methodology for visualizing sensor data on a user's display.

The underlying idea behind ’288 is to create a synchronized multi-viewport display that allows a user to navigate a 3D representation of a scene while simultaneously viewing other relevant data types, such as 2D images, that are dynamically updated based on the user's viewpoint. This is achieved by registering different data types to a common coordinate system and then processing the data to generate an object-centric visualization that provides contextually relevant information based on the user's interaction with the scene.
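To make the idea of registering data types to a common coordinate system concrete, here is a minimal sketch, not the patented implementation. It assumes each 2D image has been registered with a known camera pose in the same world frame as the 3D representation, and it uses a simple pinhole camera model. The `RegisteredImage` type, its fields, and the `project` function are hypothetical names introduced for illustration only.

```python
from dataclasses import dataclass


@dataclass
class RegisteredImage:
    # Hypothetical record for a 2D image registered to the shared world frame.
    name: str
    position: tuple   # camera center in world coordinates (x, y, z)
    rotation: tuple   # world-to-camera rotation as three rows of three floats
    focal_px: float   # focal length in pixels (assumed pinhole model)
    width: int
    height: int


def project(image, point):
    """Return the (u, v) pixel where a world point lands, or None if not visible."""
    # Express the point relative to the camera center, then rotate into the camera frame.
    d = [p - c for p, c in zip(point, image.position)]
    x, y, z = (sum(row[i] * d[i] for i in range(3)) for row in image.rotation)
    if z <= 0:
        return None  # point is behind the camera
    u = image.focal_px * x / z + image.width / 2
    v = image.focal_px * y / z + image.height / 2
    if 0 <= u < image.width and 0 <= v < image.height:
        return (u, v)
    return None  # point falls outside the image frame
```

With a shared frame like this, a location the user focuses on in the 3D view can be mapped to a pixel in each registered image, which is one way the displays could be kept in step.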

The claims of ’288 focus on a method comprising providing a stored data collection associated with an object of interest, generating an object information display in a single user viewport, navigating a scene camera to generate a user-selected positioning relative to a 3D representation of the object of interest, and updating the object information display in real time as the scene camera is being navigated. The updated object information display includes an object-centric visualization of the 3D representation of the object of interest derived from the user's positioning of the scene camera relative to the 3D representation as appearing in the single user viewport, and is provided with a concurrent display of at least one additional data type.

In practice, the invention allows a user to virtually explore a scene, such as a cellular tower or a commercial roof, and obtain detailed information about specific objects or features. For example, a user could navigate a 3D point cloud of a cellular tower and, as they focus on a particular antenna, the system would automatically display high-resolution 2D images of that antenna from various angles. This allows the user to inspect the antenna for damage or other issues without having to physically climb the tower.
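The antenna example can be sketched as a ranking step: given where the user has placed the scene camera and the point they are focusing on, order the stored 2D images by how closely each image's capture direction matches the user's viewing direction. This is an illustrative assumption about one possible selection rule, not a description of the claimed method; `rank_images` and its parameters are hypothetical, and the sketch assumes no camera sits exactly at the target point.

```python
import math


def unit(v):
    """Scale a 3D vector to length 1."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def rank_images(scene_camera_pos, target, image_positions):
    """Sort image names from best to worst match with the user's view of the target.

    image_positions maps an image name to the world position of the camera
    that captured it (hypothetical input format).
    """
    view_dir = unit(tuple(t - c for t, c in zip(target, scene_camera_pos)))
    scored = []
    for name, pos in image_positions.items():
        img_dir = unit(tuple(t - p for t, p in zip(target, pos)))
        # Cosine similarity between the two viewing directions: 1.0 means identical.
        score = sum(a * b for a, b in zip(view_dir, img_dir))
        scored.append((score, name))
    scored.sort(reverse=True)
    return [name for _, name in scored]
```

Re-running a ranking like this whenever the scene camera moves is one way the concurrent 2D image display could follow the user's navigation.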

’288 differentiates itself from prior approaches by synchronizing different data types and providing an object-centric visualization that is dynamically updated based on the user's viewpoint. This allows the user to seamlessly integrate different types of information and generate contextually relevant insights. Furthermore, the system can infer user intent based on their navigation and positioning of the scene camera, and use this information to further refine the visualization and provide more relevant data.

How does this patent fit in the bigger picture?

Technical landscape at the time

In the early 2020s, when ’288 was filed, image capture and processing technologies were advancing rapidly, and remotely operated vehicles such as drones were increasingly used for tasks like object inspection. Systems commonly relied on RGB images and other sensor data to generate 3D information, but hardware and software constraints made it non-trivial to give users contextually relevant information derived from this data in real time, especially when data capture was separated from data analysis.

Novelty and Inventive Step

The examiner allowed the claims because previously cited references do not disclose the claims as amended. The application presents object-centric exploration techniques for handheld augmented reality that allow users to access information freely using a virtual copy metaphor.

Claims

There are 11 claims in total, with claim 1 being the only independent claim. Independent claim 1 is directed to a method of remotely inspecting a real-life object using previously acquired object-related data. The dependent claims elaborate on the method, specifying details and variations of the data types, display configurations, user navigation, and information derived from the inspection.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

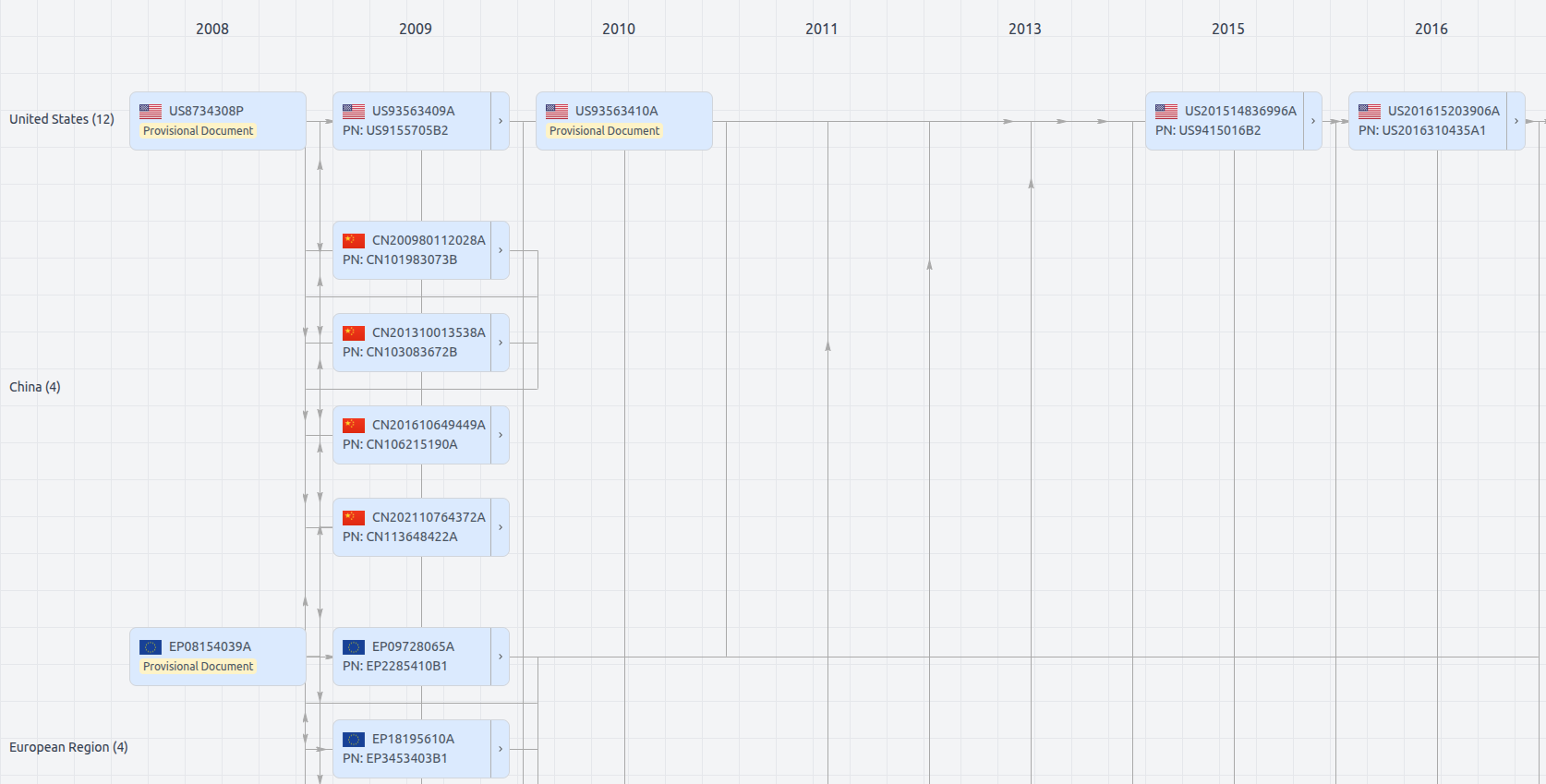

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

US11935288

- Application Number: US17567347
- Filing Date: Jan 3, 2022
- Status: Granted
- Expiry Date: Dec 1, 2040
- External Links: Slate, USPTO, Google Patents