Real-Time Accent Conversion Model

Patent No. US11948550 (titled "Real-Time Accent Conversion Model") was filed by Canadian Imperial Bank Of Commerce on Aug 27, 2021.

What is this patent about?

’550 is related to the field of speech processing, specifically accent conversion. The background involves challenges in communication due to different accents, even among speakers of the same language. Existing solutions, such as voice conversion methods that adjust audio characteristics or speech-to-text-to-speech (STT-TTS) approaches, have limitations in capturing pronunciation nuances and maintaining real-time performance.

The underlying idea behind ’550 is to use a machine-learning pipeline to convert speech from one accent to another in real-time. This involves first deriving a linguistic representation of the input speech using an automatic speech recognition (ASR) engine, and then synthesizing audio data with the target accent using a voice conversion (VC) engine. The key is to operate on a non-text linguistic representation to preserve nuances and minimize latency.

The claims of ’550 focus on a system, a non-transitory computer-readable medium, and a method for real-time accent conversion. The core process involves training a first machine-learning algorithm with audio data from multiple speakers of a first accent, applying this algorithm to received speech to derive a non-text linguistic representation, synthesizing audio data with a second accent using a second machine-learning algorithm, and converting the synthesized audio data into a synthesized version of the received speech with the second accent. A key aspect is mapping phonemes from one accent to another at the linguistic representation level.

In practice, the system uses an ASR engine trained on multiple speakers of the input accent to create a generalized linguistic representation. This representation is then fed into a VC engine, which has been trained to map the input accent's linguistic features to those of the target accent. The VC engine synthesizes audio data, which is then converted into speech by a vocoder. This approach allows for real-time conversion because it avoids the latency associated with converting speech to text and back.

The differentiation from prior approaches lies in the use of a non-text linguistic representation as an intermediate step. Traditional methods either adjust audio characteristics directly or rely on STT-TTS, both of which have drawbacks. By operating on a linguistic level, the invention can capture subtle pronunciation differences and maintain low latency, making it suitable for real-time communication applications. The system also trains the ASR engine with multiple speakers to create a more robust and generalized representation of the input accent.

How does this patent fit in bigger picture?

Technical landscape at the time

In the early 2020s when ’550 was filed, machine learning models were increasingly used for speech processing tasks, at a time when speech-to-text and text-to-speech systems commonly relied on large datasets and significant computational resources. Accent conversion, while recognized as a desirable feature, was typically implemented using simpler voice conversion techniques that adjusted audio characteristics, when hardware or software constraints made real-time, nuanced accent transformation non-trivial.

Novelty and Inventive Step

The examiner approved the application because the prior art cited (Dirac, Hwang, and Peng) did not teach the limitations of the claims. Specifically, the examiner found that the prior art did not disclose the training and application of the machine learning model, nor the synthesizing of fourth audio data using the method described in the claims. Therefore, the examiner concluded that the prior art, either alone or in combination, did not teach the combination of limitations found in the claims.

Claims

This patent contains 22 claims, with independent claims 1, 11, and 19. The independent claims are directed to a system, a computer-readable medium, and a method, respectively, all generally focused on converting speech content from a first accent to a second accent using machine learning. The dependent claims generally elaborate on and refine the features and functionalities described in the independent claims, such as specific mappings, data characteristics, user inputs, and real-time processing aspects.

Key Claim Terms New

Definitions of key terms used in the patent claims.

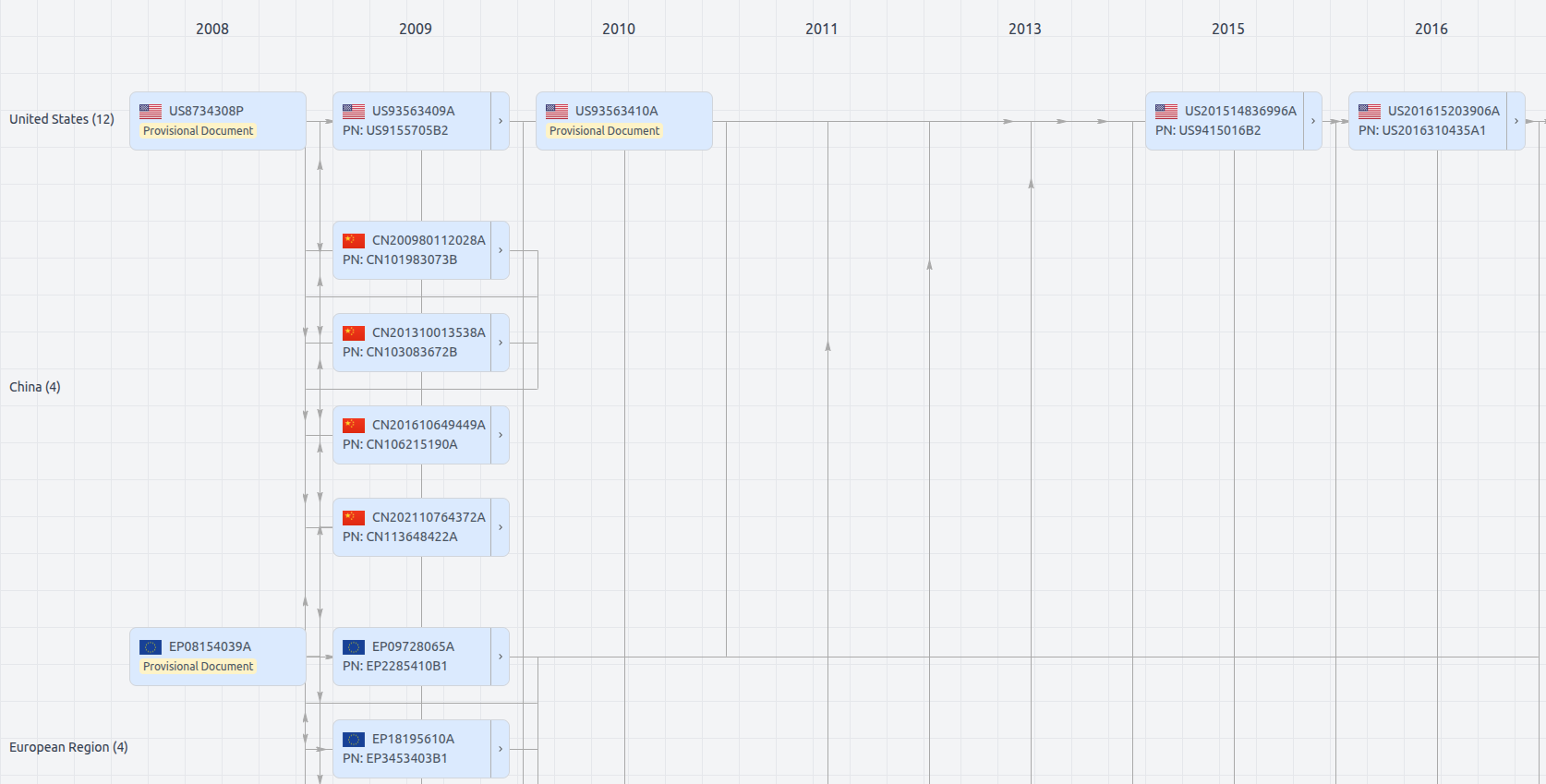

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

Date

Description

Get instant alerts for new documents

US11948550

- Application Number

- US17460145

- Filing Date

- Aug 27, 2021

- Status

- Granted

- Expiry Date

- Aug 27, 2041

- External Links

- Slate, USPTO, Google Patents