Rapid predictive analysis of very large data sets using the distributed computational graph

Patent No. US12143425 (titled "Rapid predictive analysis of very large data sets using the distributed computational graph") on Jul 21, 2024. The application was issued on Nov 12, 2024.

What is this patent about?

'425 is related to the field of predictive analysis using distributed computational graphs. The background involves the increasing volume of data and the need for systems that can analyze streaming data in conjunction with vast amounts of stored data to make meaningful conclusions and enable effective action. Existing data pipelines are limited in their capabilities and are often linear, which restricts their use in complex situations requiring branching or recurrent modification.

The underlying idea behind '425 is to create a system that intelligently combines the processing of a current data stream with the ability to retrieve relevant stored data, enabling predictive conclusions or actions. This involves a distributed computational graph where data transformations are represented as nodes, and the flow of data between transformations is represented as edges. The system also monitors its own operations and intermediate factors to optimize function and maximize the probability of reliable conclusions.

The claims of '425 focus on a distributed computing network comprising first, second, and third pluralities of computer systems. The first computer system executes software instructions to receive a stream of data, process portions of it using a first transformation pipeline, and transmit the output. A second computer system processes the output using a second transformation pipeline. A third computer system stores data representing a portion of the distributed computational graph, describing the data flow between the pipelines. The third computer system monitors the execution of the pipelines and, in response, causes a fourth computer system to execute instructions that perform processing using either the first or second transformation pipeline.

In practice, the system receives streaming data from various sources, filters it, and splits it into two pathways: a streaming pathway and a batch pathway. The streaming pathway uses a transformation pipeline to perform real-time analysis, while the batch pathway stores the data for later analysis. A system sanity and retrain module monitors the progress of the analysis and adjusts the parameters of the system to optimize performance. The results of the analysis are then output in a pre-defined format.

This system differentiates itself from prior approaches by using a distributed computational graph that allows for non-linear transformation pipelines. This enables the system to handle more complex situations and to adapt to changing data streams. The system also includes a self-assessment mechanism that monitors its own operations and makes adjustments to optimize performance, which is a significant improvement over rigidly programmed data pipelines.

How does this patent fit in bigger picture?

Technical Landscape

In the mid-2010s when ’425 was filed, large-scale data processing was typically implemented using distributed storage and batch-processing frameworks that relied on rigid, linear data pipelines. At a time when systems commonly relied on manual intervention to address pipeline failures or performance bottlenecks, the integration of real-time streaming analytics with historical batch data was often fragmented into separate, non-communicating architectures. Furthermore, software constraints made the dynamic self-modification of computational graphs non-trivial, as most environments required static definitions of data flows that could not easily adapt to intermediate results or operational instability without restarting the analysis campaign.

Prosecution Position

The examiner allowed the application because the prior art did not teach the specific use of a third computer system that stores a distributed computational graph to manage the interaction between two distinct transformation pipelines. Specifically, the examiner noted that while existing systems could process data through sequential pipelines, they lacked the claimed mechanism where a third system monitors the execution of the first and second pipelines and, based on that monitoring, triggers a fourth distinct computer system to perform additional processing on the data streams. The allowance was based on this multi-system coordination where the monitoring of pipeline execution directly controls the allocation of tasks to a separate fourth computing resource.

Claims

This patent contains 6 claims, with claim 1 being the only independent claim. Independent claim 1 is directed to a system comprising a distributed computing network that processes data streams using transformation pipelines and monitors their execution. The dependent claims generally elaborate on the monitoring and processing aspects of the system described in the independent claim.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

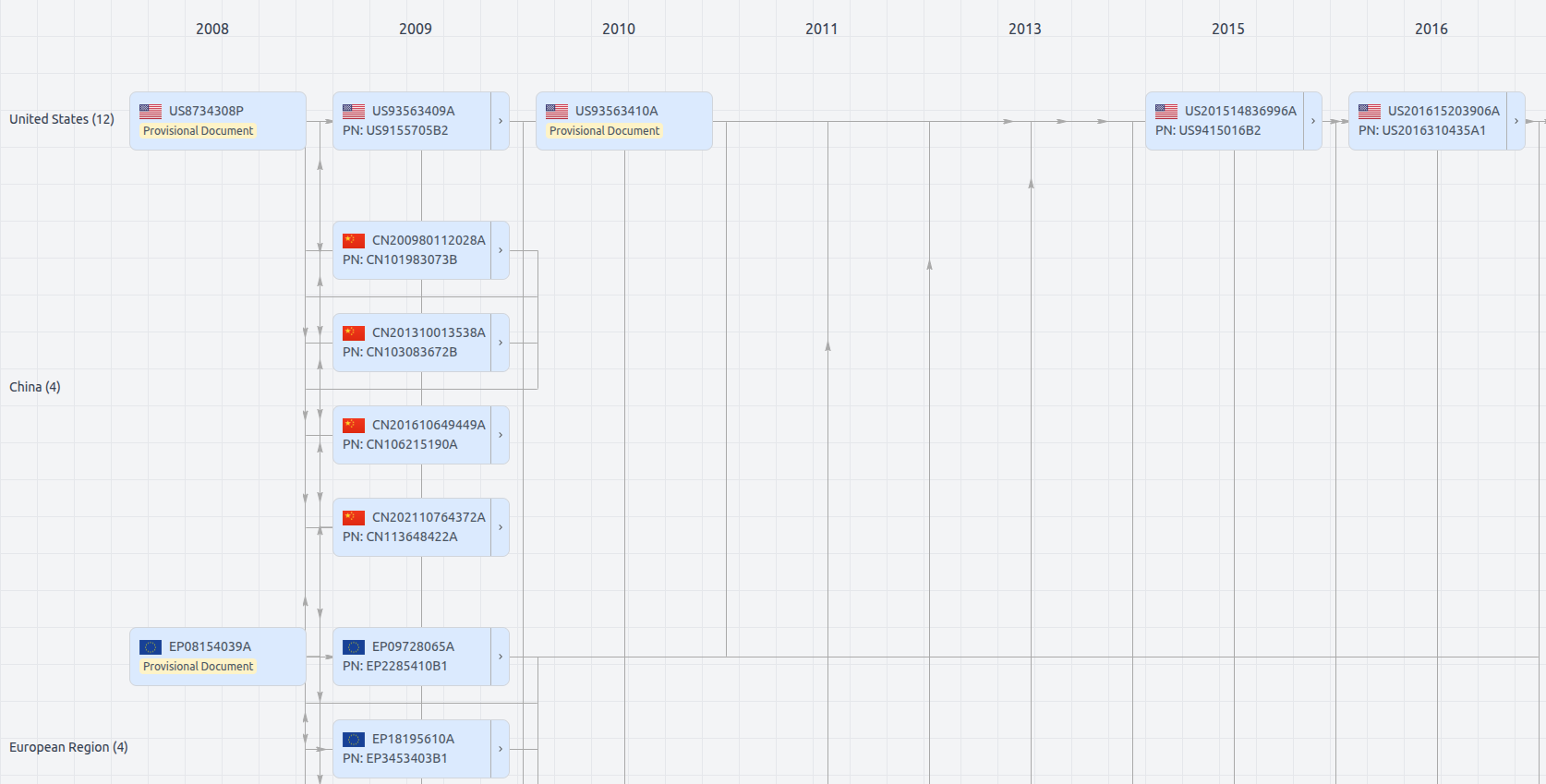

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

Get instant alerts for new documents

US12143425

- Application Number

- US18779035A

- Filing Date

- Jul 21, 2024

- Publication Date

- Nov 12, 2024

- External Links

- Slate, USPTO, Google Patents