Systems And Methods For Hardware-Based Pooling

Patent No. US12307350 (titled "Systems And Methods For Hardware-Based Pooling") was filed by Tesla Inc on Jan 4, 2018.

What is this patent about?

’350 relates to convolutional neural networks (CNNs), specifically to improving the efficiency of pooling layers. CNNs are used for image classification and object recognition, employing multiple layers to extract features from input images. Pooling layers are essential for down-sampling feature maps, which reduces the computational load on later layers. However, traditional pooling methods can be computationally intensive and limit overall CNN efficiency.

The underlying idea behind ’350 is to implement a hardware-based pooling architecture that directly processes the output of a convolution engine. Instead of storing the convolution output in memory and then performing pooling, the invention reformats the data on-the-fly into a grid-like structure. This allows for efficient application of pooling operations, such as max-pooling or average-pooling, without the need for complex intermediate steps or extensive data storage.

The claims of ’350 focus on a pooling unit that reformats input data into multiple rows to create a pooling array. The input data is a linearized array representing an output channel from a convolutional layer. The rows are shifted relative to each other over several arithmetic cycles to align data for pooling. The pooling unit then applies pooling operations to these aligned rows to generate a pooling output, outputting a pooling value every number of arithmetic cycles corresponding to a stride value.
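The reformatting step described in the claims can be illustrated with a small software model. This is a minimal sketch, not the patented circuit: it assumes the linearized array is row-major with a known row width, and the function name `pool_linearized` and its parameters are hypothetical names chosen for illustration.

```python
def pool_linearized(data, width, window=2, stride=2, op=max):
    """Pool a row-major linearized feature map (software model, assumed layout)."""
    if len(data) % width != 0:
        raise ValueError("data length must be a multiple of width")
    height = len(data) // width

    # Reformat: cut the linear array into rows so that values that are
    # vertical neighbors in the feature map share a column index.
    rows = [data[r * width:(r + 1) * width] for r in range(height)]

    pooled = []
    for top in range(0, height - window + 1, stride):
        band = rows[top:top + window]  # the rows covered by the window
        out_row = []
        for left in range(0, width - window + 1, stride):
            # Gather the window's values from the aligned rows.
            values = [row[c] for row in band
                      for c in range(left, left + window)]
            out_row.append(op(values))
        pooled.append(out_row)
    return pooled


def mean(values):
    """Average-pooling operator."""
    return sum(values) / len(values)


feature_map = list(range(16))  # a 4x4 channel, linearized row by row
print(pool_linearized(feature_map, width=4))           # max-pooling
print(pool_linearized(feature_map, width=4, op=mean))  # average-pooling
```

Passing the pooling operator as an argument mirrors the point that max-pooling and average-pooling share the same reformatted rows and differ only in the final reduction.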

In practice, the pooling unit receives a linearized array from a matrix processor, which represents a feature map. The row aligner then arranges this data into rows, effectively creating a grid where neighboring values are aligned vertically and horizontally. This alignment allows the pooler to easily extract values for pooling calculations. The stride value determines how often pooling data is output, controlling the sliding window's movement across the feature map.
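The relationship between stride and output timing can also be sketched as a streaming model. The sketch below assumes one value arrives per arithmetic cycle in row-major order and uses a single bounded buffer in place of the row aligner; `stream_pool` and its parameters are hypothetical, and the patent's actual hardware timing may differ.

```python
from collections import deque


def stream_pool(stream, width, window=2, stride=2, op=max):
    """Yield (cycle, pooled_value) while values arrive one per cycle."""
    # Keep just enough history to span `window` rows of the feature map.
    history = deque(maxlen=(window - 1) * width + window)
    for cycle, value in enumerate(stream):
        history.append(value)
        row, col = divmod(cycle, width)
        # (row, col) is the bottom-right corner of a candidate window.
        if row < window - 1 or col < window - 1:
            continue  # window would extend past the top or left edge
        if (row - (window - 1)) % stride or (col - (window - 1)) % stride:
            continue  # not a stride-aligned window position
        buf = list(history)
        # A value `dr` rows up and `dc` columns left arrived
        # dr * width + dc cycles ago.
        values = [buf[-1 - (dr * width + dc)]
                  for dr in range(window) for dc in range(window)]
        yield cycle, op(values)


# A 4x4 channel, linearized row by row (illustrative values).
channel = [1, 3, 2, 0,
           4, 2, 1, 5,
           0, 1, 2, 3,
           7, 0, 1, 1]
for cycle, value in stream_pool(channel, width=4):
    print(f"cycle {cycle}: pooled value {value}")
```

In this model, outputs within one band of rows appear `stride` cycles apart, which is the behavior the stride value is described as controlling.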

This approach differs from prior solutions by avoiding intermediate memory storage of the convolution result. By directly processing the output channel from the matrix processor, the pooling unit reduces computation time and improves overall efficiency. The on-the-fly reformatting and pooling operations enable faster processing of CNN layers, leading to improved performance in image classification and object recognition tasks. The hardware-based implementation allows for parallel processing and optimized data flow, further enhancing efficiency compared to software-based pooling methods.

How does this patent fit in the bigger picture?

Technical landscape at the time

When ’350 was filed in the late 2010s, convolutional neural networks were increasingly used for image classification and object recognition. Systems commonly relied on techniques like weight sharing to improve the performance of convolutional layers, but pooling layers were often neglected due to architectural constraints. Hardware and software constraints made efficient hardware implementations of pooling operations non-trivial.

Novelty and Inventive Step

The examiner approved the application because prior art references, taken individually or together, did not disclose the specific steps for hardware-based pooling. This includes reformatting data into rows and shifting those rows based on a stride defined by a number of arithmetic cycles, which dictates how often the pooling data is output. These features, combined with the other elements of the claimed invention, distinguish it from the prior art.

Claims

This patent contains 18 claims, of which claims 1, 11, and 16 are independent. The independent claims are directed to a pooling unit and to methods for using a hardware-based pooling system and a pooling unit; they focus on reformatting input data and applying pooling operations. The dependent claims generally elaborate on the specifics and features of the pooling unit and methods described in the independent claims.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

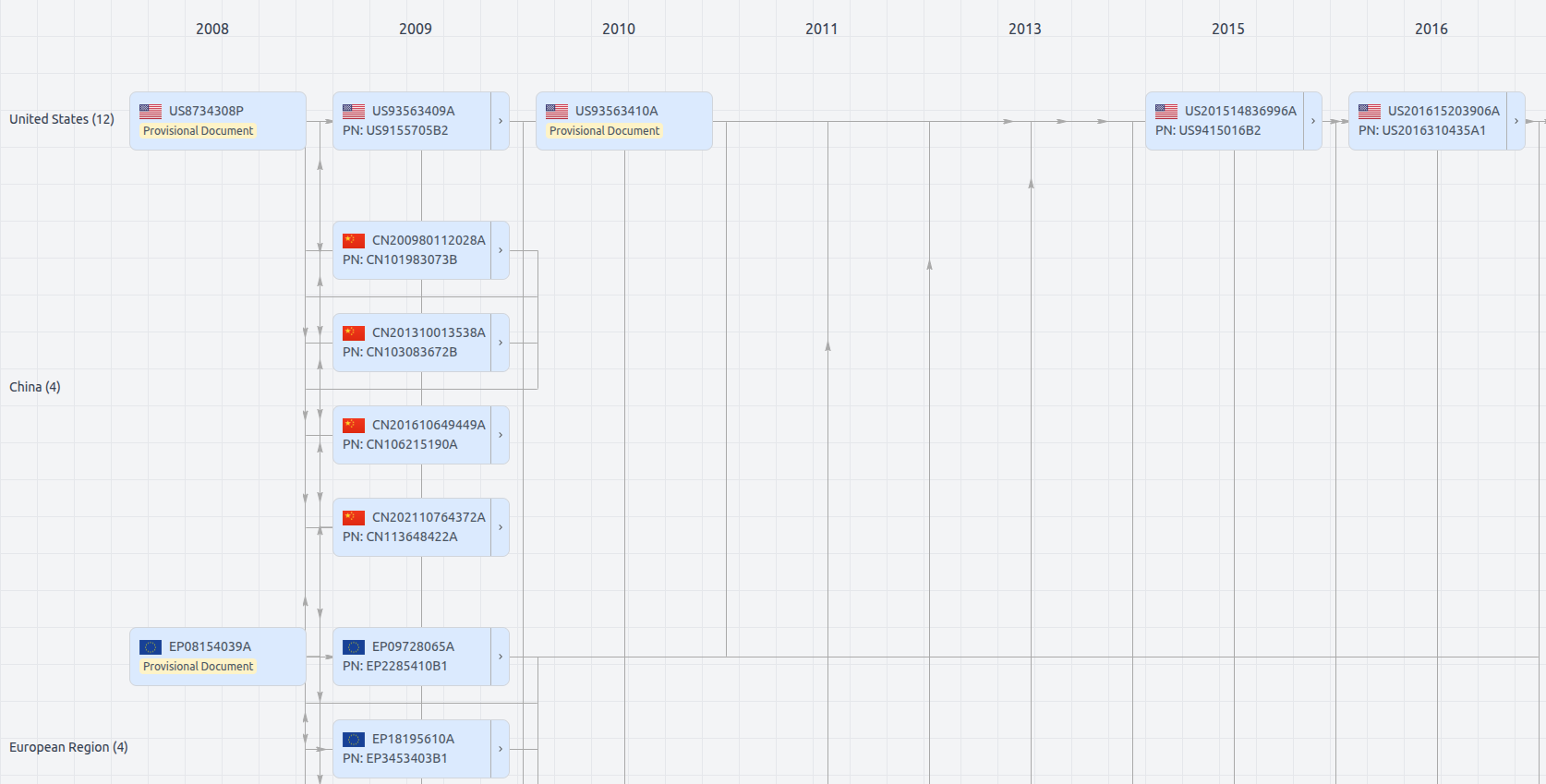

Patent Family

US12307350

- Application Number: US15862369
- Filing Date: Jan 4, 2018
- Status: Granted
- Expiry Date: May 15, 2041