Interactive Virtual Reality Broadcast Systems And Methods

Patent No. US12322014 (titled "Interactive Virtual Reality Broadcast Systems And Methods") was filed by Tphoenixsmr Llc on Oct 28, 2024.

What is this patent about?

’014 relates to interactive virtual reality (VR) broadcast systems. The patent addresses the problem of replicating the experience of live musical performances in situations, such as pandemics, where social distancing is required. Traditional broadcast systems lack the interactive elements that make live performances engaging, such as audience feedback and the ability for performers to connect with the audience's energy.

The underlying idea behind ’014 is to create a virtual environment that mimics a live performance, capturing and transmitting both the performance itself and the audience's reactions in real-time. This is achieved by using sensors to track performers' movements and expressions, generating 3D VR avatars that mirror these actions, and then synchronizing these avatars with audio data. Simultaneously, audience feedback is captured and fed back to the performers, creating a closed-loop interactive experience.

The claims of ’014 focus on a method comprising: receiving audio and visual performance data; generating a VR broadcast; receiving audience feedback data from multiple audience devices; synchronizing the audience feedback data with the VR broadcast in real time; transmitting the VR broadcast with the synchronized audience feedback data to the audience devices; and outputting the VR broadcast with the synchronized audience feedback data to the audience members. The claims also cover transmitting performer feedback data to the audience devices, creating a two-way communication channel.
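The synchronization step can be illustrated with a minimal sketch. It is not the patent's claimed implementation; it assumes that broadcast frames and feedback events carry timestamps on a shared clock, and it attaches each event to the latest frame at or before it. The names `BroadcastFrame`, `FeedbackEvent`, and `synchronize` are hypothetical.

```python
from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass
class FeedbackEvent:
    timestamp: float  # seconds on a shared clock (assumption)
    device_id: str    # hypothetical identifier of the audience device
    kind: str         # illustrative label, e.g. "applause"


@dataclass
class BroadcastFrame:
    timestamp: float
    feedback: list = field(default_factory=list)


def synchronize(frames, events):
    """Attach each feedback event to the latest frame at or before it.

    Events that arrive before the first frame are dropped.
    """
    ordered = sorted(frames, key=lambda f: f.timestamp)
    times = [f.timestamp for f in ordered]
    for event in sorted(events, key=lambda e: e.timestamp):
        # Index of the last frame whose timestamp <= event timestamp.
        idx = bisect_right(times, event.timestamp) - 1
        if idx >= 0:
            ordered[idx].feedback.append(event)
    return ordered
```

Sorting both streams and using a binary search keeps the matching simple; a real-time system would more likely process events incrementally as they arrive.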

In practice, the system uses a VR system controller to manage the entire process. Performers are equipped with facial expression sensors (e.g., cameras) and body movement sensors (e.g., accelerometers or LIDAR) to capture their actions. Microphones capture the audio. This data is then used to animate 3D VR avatars in a virtual environment. Audience members use VR headsets or other devices to view the performance and provide feedback through cameras and microphones on their devices. This feedback is then displayed to the performers, for example, on AR glasses, allowing them to react to the audience in real-time.
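One way to picture the feedback path back to the performers is a small aggregation step that condenses reactions from many audience devices into a short summary suitable for a compact display. The sketch below is an assumption for illustration, not something described in the patent: the function `summarize_feedback`, the `(device_id, kind)` event shape, and the reaction labels are all hypothetical.

```python
from collections import Counter


def summarize_feedback(events, top_n=3):
    """Count reaction kinds across devices, once per device per kind.

    `events` is an iterable of (device_id, kind) pairs (assumed shape).
    Returns up to `top_n` (kind, device_count) pairs, most common first.
    """
    seen = set()
    counts = Counter()
    for device_id, kind in events:
        # Ignore repeated reactions of the same kind from one device.
        if (device_id, kind) not in seen:
            seen.add((device_id, kind))
            counts[kind] += 1
    return counts.most_common(top_n)
```

Counting each device once per reaction kind keeps a single very active viewer from dominating the summary shown to performers.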

’014 differentiates itself from prior approaches by creating a truly interactive VR experience. Instead of simply broadcasting a performance, it incorporates real-time audience feedback and allows performers to respond, mimicking the dynamic interaction of a live show. The use of 3D VR avatars allows performers to appear closer together in the virtual environment than they are in reality, overcoming the limitations of social distancing. Furthermore, the system allows audience members to select different viewpoints and zoom in on specific performers, enhancing the viewing experience.

How does this patent fit in the bigger picture?

Technical landscape at the time

In the early 2020s, when ’014 was filed, virtual reality and augmented reality systems were becoming more prevalent. Capturing and processing real-time motion data from multiple sources and rendering it into virtual environments typically required specialized hardware and software. Systems commonly relied on sensor fusion techniques to combine data from various sensors, such as cameras, accelerometers, and gyroscopes, to create a coherent representation of the physical world within the virtual environment. Furthermore, transmitting high-bandwidth, low-latency video and audio streams to multiple remote users while maintaining synchronization was a non-trivial engineering challenge.
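As a generic illustration of sensor fusion (not a technique attributed to ’014), a complementary filter blends an integrated gyroscope rate, which is smooth but drifts, with an accelerometer-derived tilt angle, which is noisy but drift-free. The function name, the axis convention, and the default `alpha` of 0.98 are assumptions chosen for this sketch.

```python
import math


def complementary_filter(angle_prev, gyro_rate, accel_y, accel_z, dt, alpha=0.98):
    """Return a fused tilt angle in radians.

    angle_prev: previous fused angle (rad)
    gyro_rate:  angular rate from the gyroscope (rad/s)
    accel_y, accel_z: accelerometer components used to estimate tilt
    dt:         time step (s)
    alpha:      weight given to the gyroscope estimate (assumed default)
    """
    # Short-term estimate: integrate the gyroscope rate.
    gyro_angle = angle_prev + gyro_rate * dt
    # Long-term reference: tilt implied by the measured gravity direction.
    accel_angle = math.atan2(accel_y, accel_z)
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle
```

Calling the function repeatedly with a stationary, tilted sensor makes the estimate converge toward the accelerometer angle, while fast motion is tracked mainly through the gyroscope term.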

Novelty and Inventive Step

Claims were amended and arguments were made during prosecution. Some claims were rejected as anticipated by prior art. Other claims were objected to as being dependent upon a rejected base claim. Ultimately, some claims were allowed. The prosecution record describes the examiner's reasoning for allowance, indicating that the allowed claims included limitations not present in the cited prior art.

Claims

This patent contains 18 claims, of which claims 1, 3, 7, 11, and 15 are independent. The independent claims generally focus on methods for virtual reality broadcasts of performances, including capturing and incorporating audience feedback. The dependent claims generally add specific details and features to the methods described in the independent claims.

Key Claim Terms

Definitions of key terms used in the patent claims.

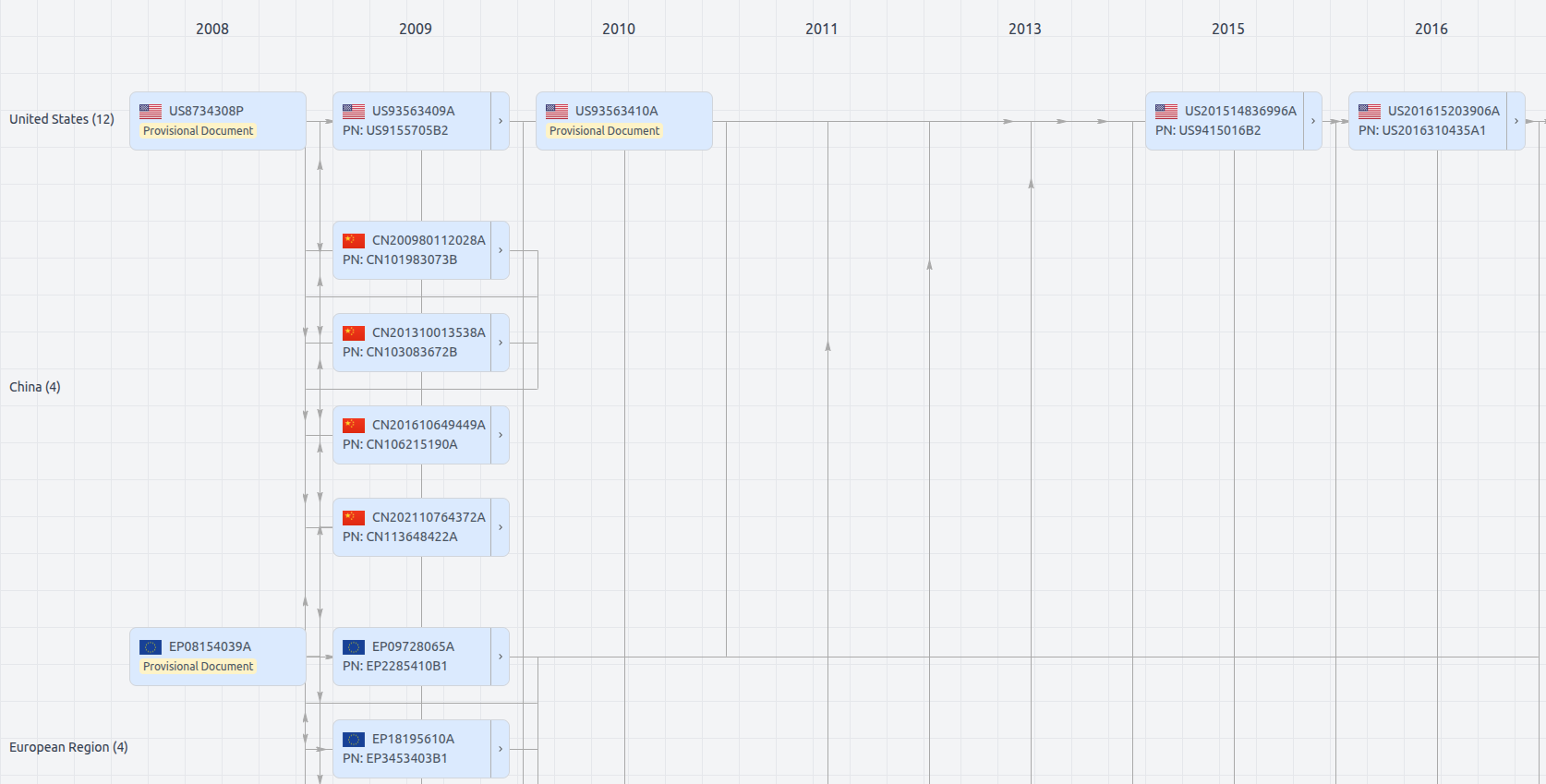

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.


US12322014

- Application Number: US18928242
- Filing Date: Oct 28, 2024
- Status: Granted
- Expiry Date: Oct 19, 2040
- External Links: Slate, USPTO, Google Patents