Photogrammetric methods and devices related thereto

Patent No. US9886774 (titled "Photogrammetric methods and devices related thereto") was filed on Aug 26, 2016 and issued on Feb 6, 2018.

What is this patent about?

'774 is related to the field of photogrammetry, specifically improving the accuracy and ease of use of 3D digital representation generation. Traditional photogrammetry often requires specialized equipment like structured light emitters or multiple cameras, or cumbersome calibration processes. Existing passive methods using single cameras can suffer from insufficient parallax or require user guidance that is prone to error.

The underlying idea behind '774 is to generate accurate 3D digital representations of objects using only a single, passive image-capture device, such as a smartphone camera. This is achieved by processing a series of overlapping 2D images using a structure from motion (SfM) algorithm to extract 3D geometry. The key insight is that sufficient detail can be obtained from these overlapping images to resolve object parameters and derive accurate measurements without active sensing or complex calibration.
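As a rough illustration of how overlapping views yield 3D geometry, the sketch below triangulates one point from two viewing rays with the midpoint method, a common building block in SfM pipelines. It is not the patent's algorithm: the function name `triangulate_midpoint`, the camera centres, and the test point are all hypothetical, and the ray directions are assumed to be known already (in a real pipeline they come from estimated camera poses and matched features).

```python
def dot(a, b):
    """Dot product of two 3-vectors given as sequences."""
    return sum(x * y for x, y in zip(a, b))


def triangulate_midpoint(c1, d1, c2, d2):
    """Return the 3D point closest to two viewing rays (midpoint method).

    c1, c2: camera centres; d1, d2: ray directions toward the same feature.
    Raises ValueError when the rays are parallel, i.e. there is no parallax.
    """
    w = [a - b for a, b in zip(c1, c2)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b  # zero when the two rays are parallel
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel: insufficient parallax")
    s = (b * e - c * d) / denom  # parameter along ray 1
    t = (a * e - b * d) / denom  # parameter along ray 2
    p1 = [ci + s * di for ci, di in zip(c1, d1)]
    p2 = [ci + t * di for ci, di in zip(c2, d2)]
    return [(u + v) / 2 for u, v in zip(p1, p2)]


# Hypothetical scene: one camera moved 0.5 units sideways between two frames.
X_true = [0.2, -0.1, 4.0]
c1, c2 = [0.0, 0.0, 0.0], [0.5, 0.0, 0.0]
d1 = [x - c for x, c in zip(X_true, c1)]  # ray from first position
d2 = [x - c for x, c in zip(X_true, c2)]  # ray from second position
X_est = triangulate_midpoint(c1, d1, c2, d2)
```

The parallel-ray check mirrors the "insufficient parallax" problem noted above: if the camera does not move enough between frames, the two rays nearly coincide and depth cannot be recovered reliably.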

The claims of '774 focus on a computerized method for obtaining measurements of an object. This involves receiving a plurality of 2D images (digital images or video frames) of a scene from a single passive image-capture device. The images must include the object of interest and have overlapping portions. The method then generates a 3D point cloud of the object and extracts spatial distances between point pairs to derive primitive geometry information (edges, lines, surfaces). Finally, this information is converted into 3D coordinates.
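The distance-extraction step can be pictured with a minimal sketch, assuming the point cloud is simply a list of (x, y, z) tuples. The helper `pairwise_distances` and the sample cloud are hypothetical, and the summary above does not say how the patented method turns these distances into edges, lines, or surfaces, so that part is not shown.

```python
import math
from itertools import combinations


def pairwise_distances(points):
    """Return {(i, j): distance} for every unordered pair of points (i < j)."""
    return {
        (i, j): math.dist(points[i], points[j])
        for i, j in combinations(range(len(points)), 2)
    }


# Hypothetical cloud: the four corners of a 3 x 4 rectangle in the plane z = 0.
cloud = [
    (0.0, 0.0, 0.0),
    (3.0, 0.0, 0.0),
    (3.0, 4.0, 0.0),
    (0.0, 4.0, 0.0),
]

distances = pairwise_distances(cloud)

# The largest pairwise distance spans the rectangle's diagonal.
longest_pair = max(distances, key=distances.get)
longest = distances[longest_pair]
```

For real clouds with many points, the all-pairs table grows quadratically, so a practical system would likely restrict the computation to neighbouring points; that choice is outside what the summary describes.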

In practice, the invention leverages the sequential nature of video to improve the quality of 3D reconstruction. By tracking points within and even outside the image boundaries, the system can better correlate features across frames. The method also parameterizes lines with two endpoints, creating a duality between points and lines that facilitates modeling of lens distortion, even with uncalibrated cameras. This allows the system to filter out noise, reduce data processing needs, and provide object boundary detection.
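One way to picture a line parameterized by two endpoints is the small data structure below. This is an assumed illustration only: the class name `Line3D` and its methods are hypothetical, the endpoints are assumed to be distinct, and the lens-distortion modeling mentioned above is not attempted here.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Line3D:
    """A line segment stored as its two 3D endpoints (assumed distinct)."""

    start: tuple
    end: tuple

    def length(self):
        """Euclidean distance between the two endpoints."""
        return math.dist(self.start, self.end)

    def point_at(self, t):
        """Point on the segment: t = 0 gives start, t = 1 gives end."""
        return tuple((1 - t) * a + t * b for a, b in zip(self.start, self.end))

    def distance_to(self, p):
        """Shortest distance from point p to the segment."""
        d = [b - a for a, b in zip(self.start, self.end)]
        v = [q - a for a, q in zip(self.start, p)]
        t = sum(x * y for x, y in zip(v, d)) / sum(x * x for x in d)
        t = max(0.0, min(1.0, t))  # clamp so the closest point stays on the segment
        return math.dist(p, self.point_at(t))


# Hypothetical edge of an object, two units long along the x axis.
edge = Line3D((0.0, 0.0, 0.0), (2.0, 0.0, 0.0))
edge_length = edge.length()
midpoint = edge.point_at(0.5)
offset = edge.distance_to((1.0, 1.0, 0.0))
```

Because the line is nothing more than a pair of points, any operation defined on points (projection, transformation, tracking across frames) applies to the line by applying it to each endpoint, which is one reading of the point-line duality the paragraph describes.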

The invention differentiates itself from prior approaches by using a single passive camera and avoiding cumbersome calibration steps. Unlike methods that rely solely on point clouds, this approach can utilize points, edges, and lines simultaneously, creating point clouds, line clouds, and edge clouds. Furthermore, the system can extract 3D measurements directly from the cloud data, potentially eliminating the need for a separate scaling step. This enables accurate measurements of objects, even at a distance, with accuracies down to a fraction of an inch, depending on the camera and distance.

How does this patent fit in the bigger picture?

Technical Landscape

In the mid-2010s, when '774 was filed, photogrammetric measurement typically relied on active-sensing hardware, such as laser scanners or structured light peripherals, to generate depth maps. Mobile systems commonly depended on these external attachments or on specialized dual-camera arrays to achieve sufficient parallax for 3D reconstruction, while software-only passive photogrammetry was often limited by manual calibration steps or physical markers such as chessboard patterns. Real-time processing of high-density point clouds was also non-trivial on the hardware of the time, and standard engineering practice often struggled to resolve object boundaries and dimensions accurately from a single moving passive sensor without known camera lens parameters.

Prosecution Position

The examiner allowed the application because the claims specify a method for calculating spatial distances between pairs of points within a 3D point cloud to generate basic geometric data. This process specifically produces information regarding edge and boundary points, straight lines, curved boundaries, and both planar and curved surfaces for an object. The examiner noted that while prior art could model buildings from point clouds, it did not teach this specific way of generating primitive geometric information from the spatial distances between point pairs in the cloud.

Claims

This patent contains 14 claims, with claims 1 and 11 being independent. The independent claims are directed to computerized methods for obtaining measurements of an object of interest from 2D images by generating a 3D point cloud and converting spatial distance information into 3D coordinates, with claim 11 adding a measurement accuracy limitation. The dependent claims generally elaborate on and further define the elements and steps recited in the independent claims.

Key Claim Terms

Definitions of key terms used in the patent claims.

Litigation Cases

Latest US litigation cases involving this patent.

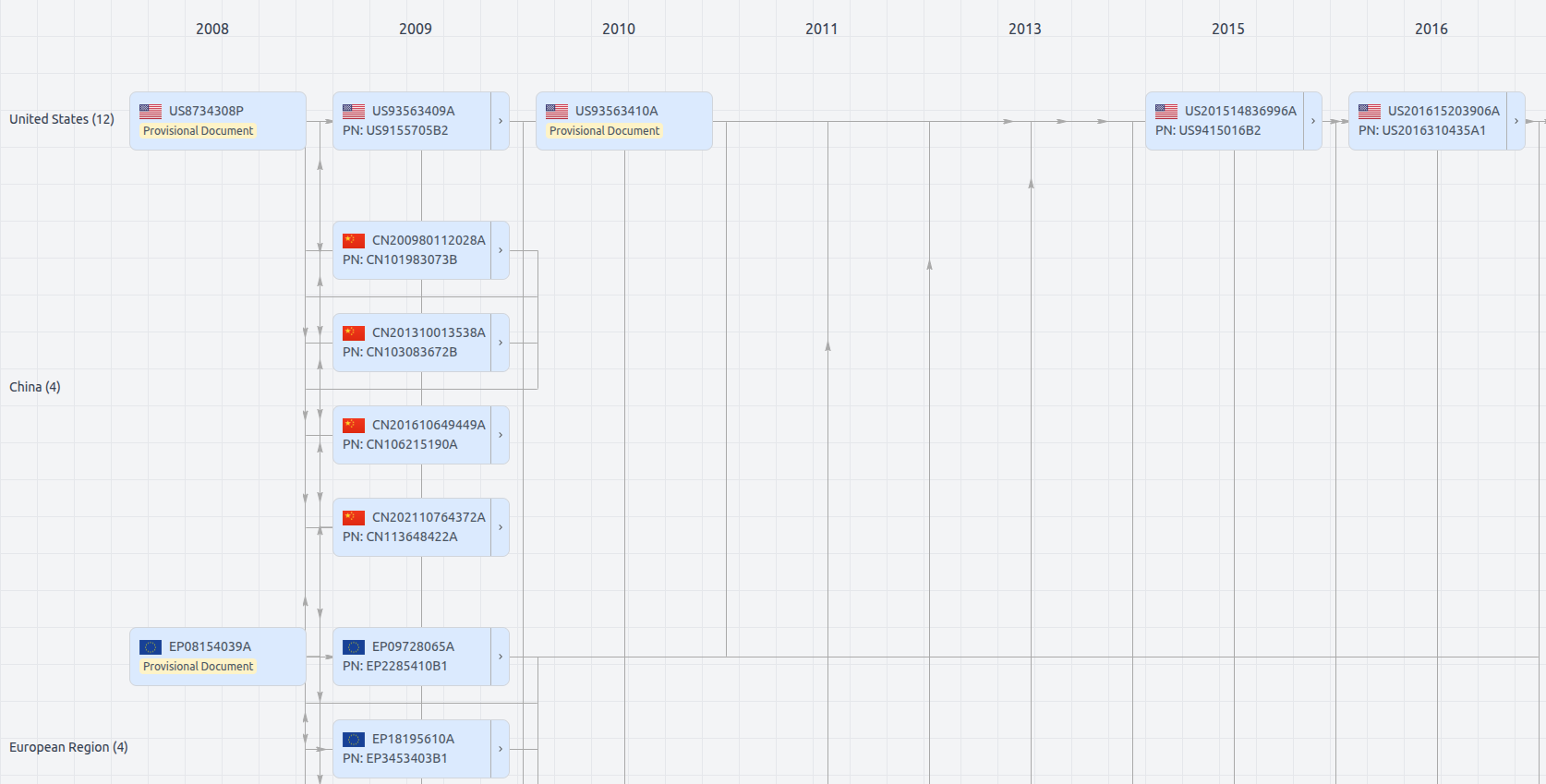

Patent Family

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history - including filings, correspondence, and decisions made by patent offices - and are crucial for understanding the patent's legal journey and any challenges it may have faced during examination.

US9886774

- Application Number: US15248206A
- Filing Date: Aug 26, 2016
- Publication Date: Feb 6, 2018
- External Links: Slate, USPTO, Google Patents