Systems and methods for extracting information about objects from scene information

Patent No. US9904867 (titled "Systems and methods for extracting information about objects from scene information") was filed on Jan 29, 2017. The patent was issued on Feb 27, 2018.

What is this patent about?

'867 is related to the field of computer vision and, more specifically, to object recognition within images and 3D data. The background context involves the challenges of accurately identifying objects in scenes, especially when dealing with arbitrary objects under varying conditions like viewpoint, lighting, and occlusion. Traditional computer-based object recognition often struggles with these complexities, particularly when objects deviate from their canonical appearance.

The underlying idea behind '867 is to enhance object recognition by fusing 2D image data with 3D scene information. This involves generating 2D image information from overlapping images of a scene and combining it with 3D data representing the same scene. By integrating these two sources of information, the system can leverage the strengths of both to improve object detection, measurement, and labeling.

The claims of '867 focus on a method for generating information about objects in a scene. This involves providing overlapping 2D images, selecting an object of interest, generating 2D image information, providing 3D information, and then generating projective geometry information by combining the 2D and 3D data. The method also includes performing image segmentation and 3D data clustering, followed by cross-validation to establish relationships between 3D data points and image elements.

In practice, the invention uses a combination of image processing and 3D data analysis techniques. Overlapping 2D images are captured using a passive image capture device, and 3D information is generated, potentially from the same images. The 2D image information is segmented to identify regions of interest, while the 3D data is clustered to group related points. These segmented and clustered data sets are then cross-validated using projective geometry to establish relationships between the 2D and 3D representations of the scene.
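The cross-validation step can be illustrated with a small sketch. It is not the patented method, only one plausible reading of "relating 3D clusters to 2D segments through projective geometry": it assumes an ideal pinhole camera with 3D points already expressed in camera coordinates, and it uses a simple majority vote to pair each cluster with a segment. All names (`project_point`, `cross_validate`, `segment_map`, `focal`, `cx`, `cy`) are hypothetical and do not come from the patent.

```python
from collections import Counter


def project_point(point, focal, cx, cy):
    """Project a 3D point (camera coordinates) to a pixel with a pinhole model.

    Assumption: no lens distortion, square pixels, principal point (cx, cy).
    """
    x, y, z = point
    if z <= 0:
        return None  # point is behind the camera and cannot be seen
    u = focal * x / z + cx
    v = focal * y / z + cy
    return int(round(u)), int(round(v))


def cross_validate(clusters, segment_map, focal, cx, cy):
    """Pair each 3D cluster with the 2D segment most of its points land in.

    Returns {cluster_id: (segment_label, agreement)}, where agreement is the
    fraction of the cluster's points that project into that segment.
    """
    height, width = len(segment_map), len(segment_map[0])
    result = {}
    for cluster_id, points in clusters.items():
        votes = Counter()
        for point in points:
            pixel = project_point(point, focal, cx, cy)
            if pixel is None:
                continue
            u, v = pixel
            if 0 <= u < width and 0 <= v < height:
                votes[segment_map[v][u]] += 1
        if votes:
            label, count = votes.most_common(1)[0]
            result[cluster_id] = (label, count / len(points))
        else:
            result[cluster_id] = (None, 0.0)
    return result


# Toy 4x4 segmentation: left half is segment 1, right half is segment 2.
segment_map = [
    [1, 1, 2, 2],
    [1, 1, 2, 2],
    [1, 1, 2, 2],
    [1, 1, 2, 2],
]

# Toy 3D clusters; cluster "b" contains one point that projects into segment 1.
clusters = {
    "a": [(-1.0, 0.0, 2.0), (-0.5, 0.0, 1.0), (-1.0, -1.0, 2.0)],
    "b": [(1.0, 0.0, 2.0), (0.5, 0.5, 1.0), (-1.0, 0.0, 2.0)],
}

matches = cross_validate(clusters, segment_map, focal=2.0, cx=2.0, cy=2.0)
for cluster_id, (label, agreement) in matches.items():
    print(cluster_id, label, round(agreement, 2))
```

A low agreement score, as for cluster "b" here, flags a cluster whose points straddle more than one image segment. In a fuller pipeline that could trigger re-clustering or re-segmentation, though how '867 handles such cases is not described in this summary.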

This approach differentiates itself from prior methods that rely solely on 2D image analysis or 3D data. By combining these two sources of information, the invention can overcome limitations of each individual approach. For example, the 3D data provides geometric context that is missing in 2D images, while the 2D images provide texture and color information that is not available in raw 3D point clouds. This fusion of information leads to more robust and accurate object recognition, measurement, and labeling.

How does this patent fit in the bigger picture?

Technical Landscape

In the mid-2010s, when '867 was filed, computer vision for object recognition was typically implemented using machine learning algorithms that relied on either positive and negative training sets or 2D image features such as color and texture. At a time when systems commonly relied on 2D image data for classification rather than integrated 3D spatial context, extracting precise physical measurements or topological relationships between arbitrary objects in uncontrolled environments was non-trivial. Hardware and software constraints often limited the accuracy of automated identification when objects deviated from canonical poses or were captured under variable lighting, making the fusion of 3D point clouds with 2D segmentation a complex engineering challenge for real-time applications.

Prosecution Position

The examiner allowed the application because the prior art did not demonstrate the specific combination of cross-validation and iterative cross-referencing steps described in the claims. While existing methods used bundle adjustment to minimize errors between camera positions and 3D points, they did not perform cross-validation on segmented 2D image data by processing it alongside combined 2D and 3D information. Furthermore, the examiner noted that the prior art failed to teach the process of iteratively cross-referencing projective geometry data, clustered 3D points, and segmented 2D images to validate the object information.

Claims

This patent contains 22 claims, with claims 1 and 18 being independent. The independent claims are directed to methods of generating information about objects in a scene by combining 2D image information and 3D information. The dependent claims generally elaborate on and refine the methods described in the independent claims, adding details regarding measurement, labeling, topology, semantic information, and specific devices used.

Key Claim Terms New

Definitions of key terms used in the patent claims.

Litigation Cases New

US Latest litigation cases involving this patent.

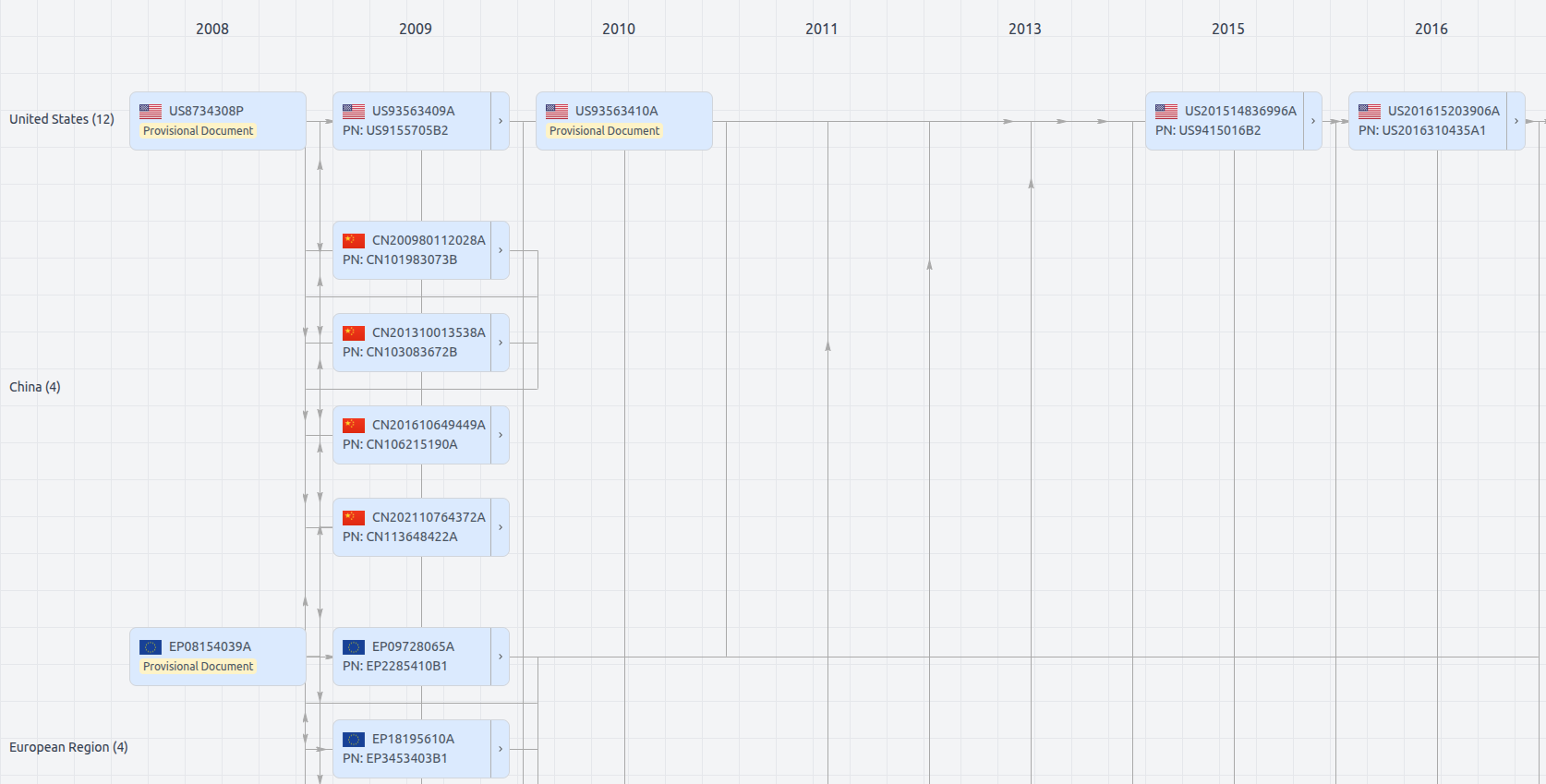

US9904867

- Application Number: US15418741A
- Filing Date: Jan 29, 2017
- Publication Date: Feb 27, 2018
- External Links: Slate, USPTO, Google Patents