Object Direction Using Video Input Combined With Tilt Angle Information

Patent No. USRE48417 (titled "Object Direction Using Video Input Combined With Tilt Angle Information") was filed by Sony Interactive Entertainment Inc on Jul 14, 2016.

What is this patent about?

’417 relates to interactive computer entertainment systems, specifically to methods and apparatuses for obtaining input data from an object such as a game controller. Traditional game controllers rely mainly on button presses and joystick movements. This patent aims to enrich user interaction by making the controller's spatial orientation and movement part of the control scheme.

The underlying idea behind ’417 is to improve the accuracy and efficiency of object tracking by fusing data from multiple sensors. Specifically, it uses tilt angle information from an inertial sensor (like an accelerometer or gyroscope) to guide the image processing of a video camera. This allows the system to predict the orientation of the object, reducing the computational burden of searching through all possible orientations during image recognition.
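To make the search-cost argument concrete, the sketch below counts how many candidate orientations a matcher would try with and without a tilt reading. It is a minimal illustration under assumed parameters: `candidate_angles`, the 1° angular step, and the ±5° tolerance window around the sensor's tilt (to absorb sensor noise) are hypothetical and are not taken from the patent.

```python
def candidate_angles(step_deg, tilt_deg=None, window_deg=None):
    """Return the rotation angles (degrees) a matcher would try.

    Without tilt information, every orientation in [0, 360) is searched.
    With a sensor tilt reading, only angles within +/- window_deg of it are
    tried (the window is an assumed tolerance, not a value from the patent).
    """
    if tilt_deg is None:
        n = int(round(360 / step_deg))
        return [i * step_deg for i in range(n)]
    n = int(window_deg // step_deg)
    # Wrap around so that, e.g., a tilt of 2 degrees also covers 357..359.
    return [(tilt_deg + k * step_deg) % 360 for k in range(-n, n + 1)]


full = candidate_angles(step_deg=1.0)
guided = candidate_angles(step_deg=1.0, tilt_deg=30.0, window_deg=5.0)
print(len(full), len(guided))  # 360 11
```

Under these assumptions the tilt reading cuts the orientation search from 360 candidates to 11.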

The claims of ’417 focus on a method, apparatus, and storage medium for obtaining input data from an object. The core process involves capturing a live image of the object, receiving tilt angle information from a non-image sensor, using this tilt information to generate a rotated reference image, comparing the live image to the rotated reference image, and generating an indication when they match.
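The claimed sequence (rotate a reference by the sensor's tilt, compare with the live image, indicate a match) can be sketched in plain Python. This is a minimal illustration under stated assumptions: images are small grayscale grids stored as lists of rows, rotation uses nearest-neighbour inverse mapping, and a "match" means the mean absolute pixel difference is at or below a threshold. The names `rotate`, `mean_abs_diff`, `match_with_tilt` and the 0.05 threshold are hypothetical; the patent does not prescribe a particular rotation method or similarity measure.

```python
import math

# Hypothetical representation: a grayscale image as a list of rows.
Image = list[list[float]]


def rotate(img: Image, angle_deg: float) -> Image:
    """Rotate an image about its centre using nearest-neighbour inverse mapping.

    Output pixels whose source falls outside the image are filled with 0.
    """
    h, w = len(img), len(img[0])
    cy, cx = (h - 1) / 2, (w - 1) / 2
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Apply the inverse rotation to find which source pixel lands on (x, y).
            dx, dy = x - cx, y - cy
            sx = cos_a * dx + sin_a * dy + cx
            sy = -sin_a * dx + cos_a * dy + cy
            ix, iy = round(sx), round(sy)
            if 0 <= ix < w and 0 <= iy < h:
                out[y][x] = img[iy][ix]
    return out


def mean_abs_diff(a: Image, b: Image) -> float:
    """Average absolute pixel difference between two same-sized images."""
    n = len(a) * len(a[0])
    return sum(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb)) / n


def match_with_tilt(live: Image, reference: Image, tilt_deg: float,
                    threshold: float = 0.05) -> bool:
    """Rotate the reference by the sensor's tilt angle, compare, and report a match."""
    rotated_ref = rotate(reference, tilt_deg)
    return mean_abs_diff(live, rotated_ref) <= threshold


# Usage: an asymmetric "L" marker, seen by the camera while tilted 90 degrees.
ref = [
    [1, 0, 0, 0, 0],
    [1, 0, 0, 0, 0],
    [1, 0, 0, 0, 0],
    [1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
live = rotate(ref, 90)                   # stands in for the captured frame
print(match_with_tilt(live, ref, 90))    # True: tilt reading agrees with the image
print(match_with_tilt(live, ref, 0))     # False: unrotated reference does not match
```

Comparing against a reference rotated by the wrong angle (0° here) gives a large difference (0.48 in this toy case), which is why a reliable tilt reading lets the matcher go straight to the right orientation.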

In practice, the invention works by first capturing a live video feed of the controller. Simultaneously, an accelerometer within the controller measures its tilt angle. This tilt angle is then used to select or generate a reference image that is pre-rotated to match the controller's current orientation. The system then compares the live image to this rotated reference image, looking for a match. When a match is found, it indicates the controller's position and orientation, which can then be translated into game commands.
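A minimal sketch of the two sensor-side steps, assuming a motionless controller whose accelerometer reads only gravity: the in-plane tilt is recovered with `atan2`, and the nearest entry is then selected from a bank of pre-rotated references. The axis convention (x to the controller's right, y up along its face), the 15° bank spacing, and the helper names `tilt_from_accel` and `select_prerotated` are all assumptions for illustration; the patent does not specify them.

```python
import math


def tilt_from_accel(ax: float, ay: float) -> float:
    """In-plane tilt in degrees from a static accelerometer reading.

    Assumed axes: x points to the controller's right and y points up along
    its face, so an upright, motionless controller reads about (0, 1 g).
    """
    return math.degrees(math.atan2(ax, ay))


def select_prerotated(bank: dict[int, str], tilt_deg: float, step_deg: int) -> str:
    """Pick the pre-rotated reference whose angle is closest to the measured tilt."""
    key = int(round(tilt_deg / step_deg)) * step_deg % 360
    return bank[key]


STEP = 15  # hypothetical spacing of the pre-rotated reference bank, in degrees
bank = {a: f"reference rotated {a} deg" for a in range(0, 360, STEP)}

tilt = tilt_from_accel(0.5, 0.866)  # gravity split between x and y: about 30 degrees
print(round(tilt, 1), select_prerotated(bank, tilt, STEP))
```

Quantizing to a fixed bank trades a little angular precision for never having to rotate a reference at run time; generating the rotated reference on demand is the alternative the text also mentions.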

This approach differs from prior art that relies solely on image processing or inertial sensors. Image-only systems are computationally expensive because they must search through all possible orientations. Inertial-only systems suffer from drift, accumulating errors over time. By combining these two sources of information, ’417 achieves a more robust and efficient tracking system. The fusion of inertial and visual data allows for faster and more accurate object tracking, leading to a more responsive and immersive gaming experience.
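The drift problem, and why an absolute reference fixes it, can be shown numerically. The sketch below integrates a gyro rate that carries a constant bias, then repeats the run with a complementary filter that blends in a drift-free angle at every step. The complementary filter is a standard fusion technique swapped in here for illustration, not the method claimed in ’417, and the bias, sample rate, and blend factor `alpha` are assumed values.

```python
def integrate_gyro(rates, dt, bias):
    """Dead-reckon an angle (deg) from gyro rates (deg/s) that carry a constant bias."""
    angle = 0.0
    for r in rates:
        angle += (r + bias) * dt
    return angle


def complementary(rates, absolute, dt, bias, alpha=0.98):
    """Complementary filter: trust the gyro short-term, the absolute angle long-term."""
    angle = 0.0
    for r, z in zip(rates, absolute):
        angle = alpha * (angle + (r + bias) * dt) + (1 - alpha) * z
    return angle


dt, steps, bias = 0.01, 1000, 0.5  # 10 s at 100 Hz with a 0.5 deg/s bias (assumed values)
rates = [0.0] * steps              # the controller is actually held still
absolute = [0.0] * steps           # drift-free angle, standing in for the camera match
drift = integrate_gyro(rates, dt, bias)
fused = complementary(rates, absolute, dt, bias)
print(f"gyro only: {drift:.3f} deg, fused: {fused:.3f} deg")
```

The gyro-only error grows linearly (0.5 deg/s over 10 s gives about 5°), while the fused error settles at the fixed point alpha * bias * dt / (1 - alpha) = 0.98 * 0.005 / 0.02 = 0.245° and stays there however long the run lasts.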

How does this patent fit in bigger picture?

Technical landscape at the time

In the mid-2000s, when the application that led to this reissue patent was originally filed, motion capture for gaming was typically implemented with dedicated camera systems or specialized controllers, and controllers that sensed orientation commonly relied on accelerometers or gyroscopes alone rather than fusing their data with video input. Hardware and software constraints of the period made real-time image processing combined with sensor fusion non-trivial.

Novelty and Inventive Step

The examiner approved the application because the prior art did not teach or suggest using tilt angle or motion information from a sensor other than the image capture device to rotate a reference image, comparing the live image with the rotated reference image, and generating an indication when they match.

Claims

This patent contains 43 claims, of which claims 1, 11, 20, 30, and 39 are independent. The independent claims generally cover methods, apparatuses, and storage media for obtaining input data from an object, or for tracking a device, using image capture and sensor data, particularly tilt angle information. The dependent claims elaborate on specific components, features, and steps of the methods, apparatuses, and storage media recited in the independent claims.

Key Claim Terms

Definitions of key terms used in the patent claims.

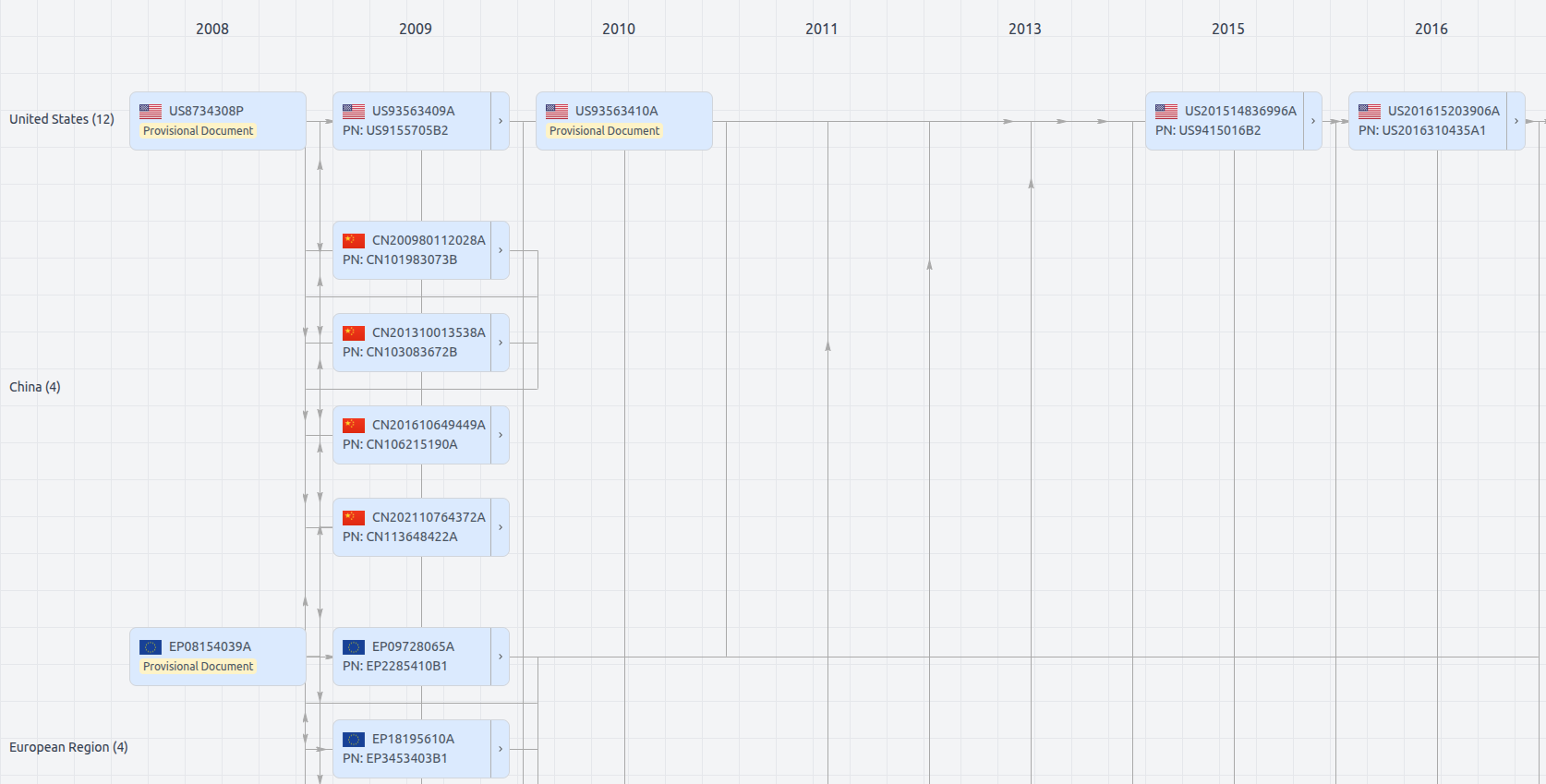

File Wrapper

The dossier documents provide a comprehensive record of the patent's prosecution history (filings, correspondence, and decisions made by patent offices) and are crucial for understanding the patent's legal journey and any challenges it faced during examination.

USRE48417

- Application Number: US15210816
- Filing Date: Jul 14, 2016
- Status: Granted
- Expiry Date: Sep 28, 2026